Chapter 11 Comparing two means

In the previous chapter, we covered the situation when your outcome variable is on a nominal scale, and your predictor variable is also on a nominal scale. Many real-world situations have that character, so you’ll find that chi-square tests are widely used.

However, you’re much more likely to find yourself in a situation where your outcome variable is on an interval scale or higher, and you’re interested in whether the outcome variable’s average value is higher in one group than in the other. For instance, a psychologist might want to know if anxiety levels are higher among parents than non-parents; or if working memory capacity is reduced by listening to music (relative to not listening to music). In a medical context, we might want to know if a new drug increases or decreases blood pressure. An agricultural scientist might want to know whether adding phosphorus to Australian native plants will kill them. In all these situations, our outcome variable is a continuous interval or ratio scale variable; and our predictor is a binary “grouping” variable. In other words, we want to compare the means of the two groups.

The standard answer to the problem of comparing means is to use a $t$-test, which comes in several varieties depending on exactly what question you want to answer. As a consequence, the majority of this chapter focuses on different types of $t$-test:

- one-sample $t$-tests are discussed in Section 11.2,

- independent samples $t$-tests are discussed in Sections 11.3 and 11.4, and

- paired samples $t$-tests are discussed in Section 11.5.

After that, we’ll talk a bit about Cohen’s $d$, which is the standard measure of effect size for a $t$-test (Section 11.6).

The later sections of the chapter focus on the assumptions of the $t$-tests and possible remedies if they are violated. However, before discussing any of these useful things, we’ll start with a discussion of the $z$-test.

11.1 The one-sample $z$-test

In this section, we’ll discuss one of the most useless tests in all of statistics: the $z$-test. Seriously – this test is seldom used in real life. Its only real purpose is that, when teaching statistics, it’s a very convenient stepping stone towards the $t$-test, which is probably the most (over)used tool in all of statistics.

Let’s use a simple example to introduce the idea behind the $z$-test. A friend of Danielle’s, Dr Zeppo, grades his introductory statistics class on a curve. Let’s suppose that the average grade in his class is 67.5, and the standard deviation is 9.5. Of his many hundreds of students, it turns out that 20 of them also take psychology classes. Out of curiosity: do psychology students tend to get the same grades as everyone else (i.e. mean 67.5), or do they tend to score higher or lower? Let’s explore this by loading the zeppo.csv data file into CogStat.

Figure 11.1: The zeppo.csv data file loaded into CogStat.

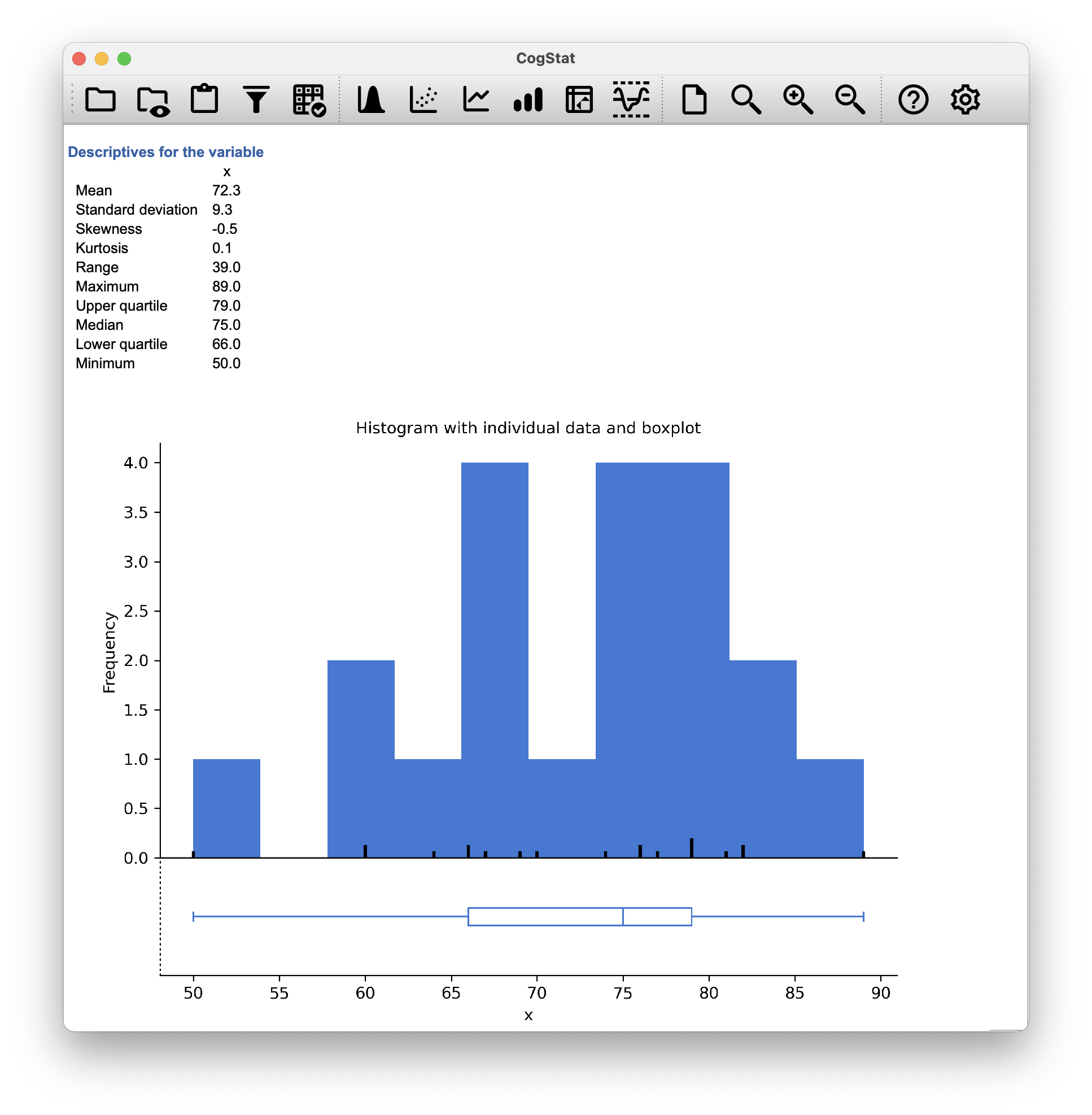

Let’s explore the data a bit by looking at some summary statistics with the Explore variable function.

Figure 11.2: The Explore variable function in CogStat.

The mean is $\bar{X} = 72.3$. Hm. It might be that the psychology students are scoring a bit higher than normal: that sample mean of $\bar{X} = 72.3$ is a fair bit higher than the hypothesised population mean of $\mu = 67.5$, but on the other hand, a sample size of $N = 20$ isn’t all that big. Maybe it’s pure chance.

To answer the question, it helps to be able to write down what it is that we know. Firstly, we know that the sample mean is $\bar{X} = 72.3$. If we assume that the psychology students have the same standard deviation as the rest of the class, then we can say that the population standard deviation is $\sigma = 9.5$. We’ll also assume that since Dr Zeppo is grading to a curve, the psychology student grades are normally distributed.

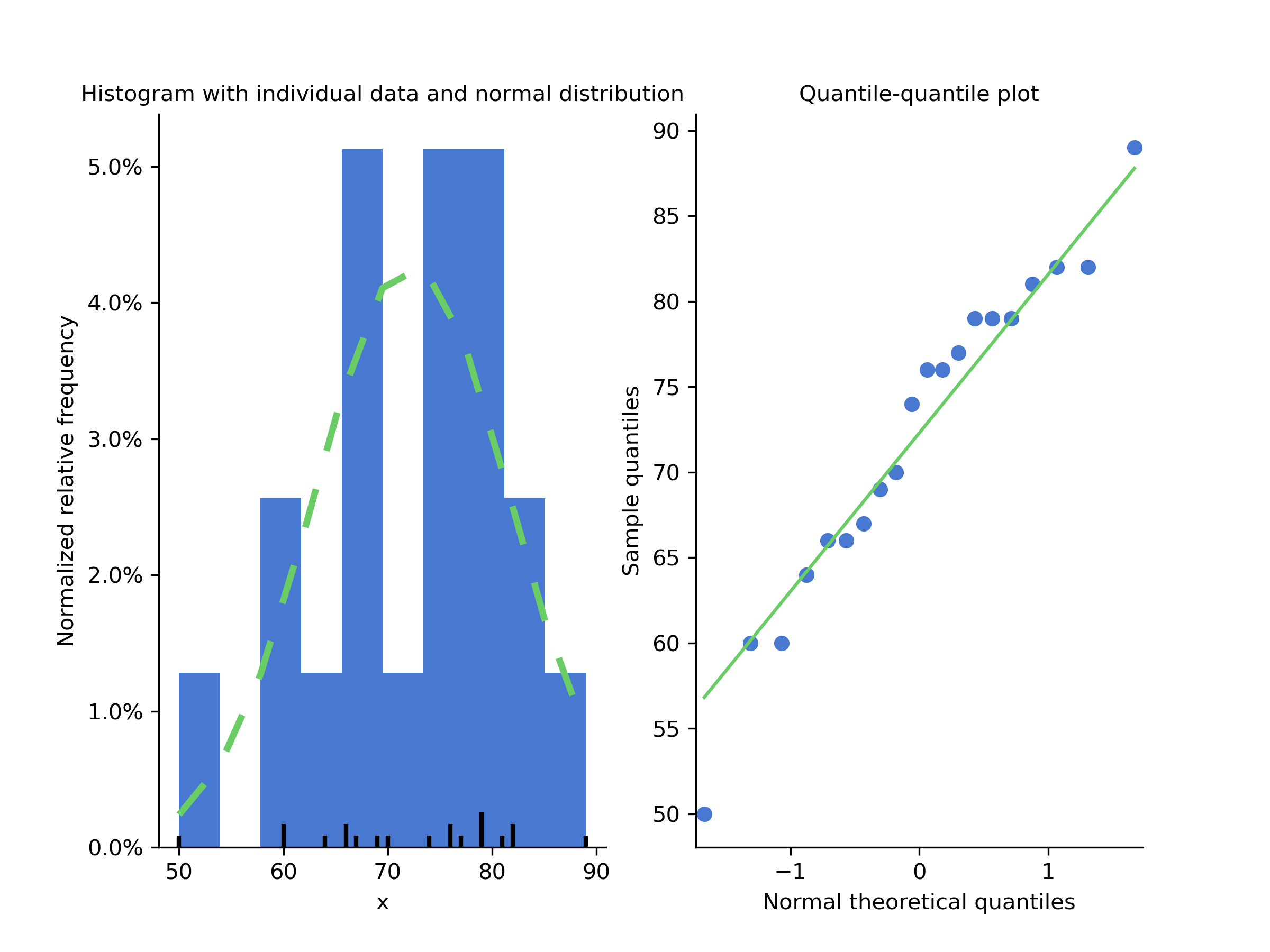

Next, it helps to be clear about what we want to learn from the data. In this case, the research hypothesis relates to the population mean $\mu$ for the psychology student grades, which is unknown. Specifically, we want to know whether $\mu = 67.5$ or not. Given what we know, can we devise a hypothesis test to solve our problem? The data, along with the hypothesised distribution from which they are thought to arise, are shown in Figure 11.3. It’s not entirely obvious what the right answer is, is it? For this, we are going to need some statistics.

Figure 11.3: The theoretical distribution (dashed green line) from which the psychology student grades (blue bars) are assumed to be generated, as shown in the Population properties section of the CogStat output.

The first step in constructing a hypothesis test is to be clear about what the null and alternative hypotheses are. This isn’t too hard to do. Our null hypothesis, $H_0$, is that the true population mean $\mu$ for psychology student grades is 67.5; and our alternative hypothesis, $H_1$, is that the population mean isn’t 67.5. In mathematical notation, these hypotheses become

$$H_0: \mu = 67.5 \qquad H_1: \mu \neq 67.5$$

though to be honest, this notation doesn’t add much to our understanding of the problem; it’s just a compact way of writing down what we’re trying to learn from the data. The null hypothesis and the alternative hypothesis for our test are both illustrated in Figure 11.4. In addition to providing us with these hypotheses, the scenario outlined above provides us with a fair amount of background knowledge that might be useful. Specifically, there are two special pieces of information that we can add:

- The psychology grades are normally distributed.

- The true standard deviation of these scores is known to be 9.5.

For the moment, we’ll act as if these are absolutely trustworthy facts. In real life, this kind of absolutely trustworthy background knowledge doesn’t exist, and so if we want to rely on these facts we’ll just have to make the assumption that these things are true. However, since these assumptions may or may not be warranted, we might need to check them. For now though, we’ll keep things simple.

Figure 11.4: Graphical illustration of the null and alternative hypotheses assumed by the one-sample $z$-test (the two-sided version, that is). The null and alternative hypotheses both assume that the population distribution is normal, and additionally assume that the population standard deviation is known (fixed at some value $\sigma_0$). The null hypothesis (left) is that the population mean $\mu$ is equal to some specified value $\mu_0$. The alternative hypothesis is that the population mean differs from this value, $\mu \neq \mu_0$.

The next step is to figure out what would be a good choice for a diagnostic test statistic, something that would help us discriminate between $H_0$ and $H_1$. Given that the hypotheses all refer to the population mean $\mu$, you’d feel confident that the sample mean $\bar{X}$ would be a helpful place to start. We could look at the difference between the sample mean $\bar{X}$ and the value that the null hypothesis predicts for the population mean. In our example, that would mean we calculate $\bar{X} - 67.5$. More generally, if we let $\mu_0$ refer to the value that the null hypothesis claims is our population mean, then we’d want to calculate

$$\bar{X} - \mu_0$$

If this quantity equals or is very close to 0, things are looking good for the null hypothesis. If this quantity is a long way away from 0, then it looks less likely that the null hypothesis is worth retaining. But how far away from zero should it be for us to reject $H_0$?

To figure that out, we need to be a bit more sneaky, and we’ll need to rely on those two pieces of background knowledge shared previously, namely that the raw data are normally distributed, and that we know the value of the population standard deviation $\sigma$. If the null hypothesis is actually true, and the true mean is $\mu_0$, then these facts together mean that we know the complete population distribution of the data: a normal distribution with mean $\mu_0$ and standard deviation $\sigma$. Adopting the notation from Chapter 7.4.2, a statistician might write this as:

$$X \sim \text{Normal}(\mu_0, \sigma)$$

Okay, if that’s true, then what can we say about the distribution of $\bar{X}$? Well, as we discussed earlier (see Chapter 8.3.3), the sampling distribution of the mean $\bar{X}$ is also normal, and has mean $\mu$. But the standard deviation of this sampling distribution, $\text{se}(\bar{X})$, which is called the standard error of the mean, is

$$\text{se}(\bar{X}) = \frac{\sigma}{\sqrt{N}}$$

In other words, if the null hypothesis is true then the sampling distribution of the mean can be written as follows:

$$\bar{X} \sim \text{Normal}(\mu_0, \text{se}(\bar{X}))$$

Now comes the trick. What we can do is convert the sample mean $\bar{X}$ into a standard score (Section 5.4). This is conventionally written as $z$, but for now let us refer to it as $z_{\bar{X}}$. (The reason for using this expanded notation is to help you remember that we’re calculating a standardised version of a sample mean, not a standardised version of a single observation, which is what a $z$-score usually refers to.) When we do so, the $z$-score for our sample mean is

$$z_{\bar{X}} = \frac{\bar{X} - \mu_0}{\text{se}(\bar{X})}$$

or, equivalently

$$z_{\bar{X}} = \frac{\bar{X} - \mu_0}{\sigma / \sqrt{N}}$$

This $z$-score is our test statistic. The nice thing about using this as our test statistic is that like all $z$-scores, it has a standard normal distribution:

$$z_{\bar{X}} \sim \text{Normal}(0, 1)$$
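To see these claims in action, here is a minimal Python simulation sketch that uses only the standard library. It assumes the null hypothesis is exactly true, with $\mu_0 = 67.5$, $\sigma = 9.5$ and $N = 20$ taken from the Dr Zeppo example. The number of simulated classes and the random seed are arbitrary choices of ours. The sketch checks that the standard deviation of many simulated sample means comes out close to $\sigma / \sqrt{N}$. It also checks that the resulting $z_{\bar{X}}$ values behave like a standard normal variable: their mean is near 0, and about 5% of them fall beyond ±1.96.

```python
import math
import random
import statistics

MU0, SIGMA, N = 67.5, 9.5, 20   # null-hypothesis mean, known SD, sample size
REPS = 20_000                   # number of simulated classes (arbitrary choice)

rng = random.Random(2024)       # fixed seed so the run is reproducible
se = SIGMA / math.sqrt(N)       # theoretical standard error of the mean

sample_means = []
z_scores = []
for _ in range(REPS):
    # draw one class of N grades from Normal(MU0, SIGMA), i.e. H0 is true
    sample = [rng.gauss(MU0, SIGMA) for _ in range(N)]
    m = statistics.fmean(sample)
    sample_means.append(m)
    z_scores.append((m - MU0) / se)   # standardised sample mean

simulated_se = statistics.stdev(sample_means)
mean_z = statistics.fmean(z_scores)
share_extreme = sum(abs(z) > 1.96 for z in z_scores) / REPS

print(f"theoretical se = {se:.3f}, simulated se = {simulated_se:.3f}")
print(f"mean of z = {mean_z:.3f}, share with |z| > 1.96 = {share_extreme:.3f}")
```

Because the data are simulated, the exact numbers change with the seed. The simulated standard error should still land close to $9.5 / \sqrt{20} \approx 2.12$.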

In other words, regardless of what scale the original data are on, the $z$-statistic itself always has the same interpretation: it’s equal to the number of standard errors that separate the observed sample mean $\bar{X}$ from the population mean $\mu_0$ predicted by the null hypothesis. Better yet, regardless of what the population parameters for the raw scores actually are, the 5% critical regions for the $z$-test are always the same, as illustrated in Figures 11.5 and 11.6. And what this meant, way back in the days when people did all their statistics by hand, is that someone could publish a table like this:

| desired $\alpha$ level | two-sided test | one-sided test |
|---|---|---|
| .1 | 1.644854 | 1.281552 |
| .05 | 1.959964 | 1.644854 |
| .01 | 2.575829 | 2.326348 |
| .001 | 3.290527 | 3.090232 |

which in turn meant that researchers could calculate their $z$-statistic by hand, and then look up the critical value in a textbook. That was an incredibly handy thing to be able to do back then.
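Today nobody needs the printed table, because the critical values can be recomputed from the inverse of the standard normal cumulative distribution function. The minimal sketch below uses Python’s standard-library `statistics.NormalDist`. This is our choice of tool and not something CogStat exposes. It reproduces the table above. For a two-sided test, $\alpha$ is split between both tails, so we look up the $1 - \alpha/2$ quantile. For a one-sided test, we look up the $1 - \alpha$ quantile.

```python
from statistics import NormalDist

std_normal = NormalDist(mu=0.0, sigma=1.0)  # the standard normal distribution

critical_values = {}
for alpha in (0.1, 0.05, 0.01, 0.001):
    two_sided = std_normal.inv_cdf(1 - alpha / 2)  # alpha split over both tails
    one_sided = std_normal.inv_cdf(1 - alpha)      # all of alpha in one tail
    critical_values[alpha] = (two_sided, one_sided)
    print(f"{alpha:<6} {two_sided:.6f} {one_sided:.6f}")
```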

Figure 11.5: Rejection regions for the one-sided $z$-test

Figure 11.6: Rejection regions for the two-sided $z$-test

Now, as mentioned earlier, the $z$-test is almost never used in practice. However, the test is so incredibly simple that it’s really easy to do one manually. Let’s go back to the data from Dr Zeppo’s class. We’re not going to use CogStat for the rest of this section. CogStat automatically (and correctly) estimates the population standard deviation from the sample instead of assuming that it is known. That behaviour will come in handy in the $t$-test section. So from now on, let’s just follow the logic without trying to replicate it in CogStat.

- The sample size is $N = 20$ (we know this from Figure 11.1).

- The mean is $\bar{X} = 72.3$ (we know this from Figure 11.2).

- The true population standard deviation is $\sigma = 9.5$ (we just have to believe this for the example’s sake, because we said so earlier).

- The null hypothesis is that the mean is $\mu_0 = 67.5$.

Next, let’s calculate the (true) standard error of the mean:

$$\text{se}(\bar{X}) = \frac{\sigma}{\sqrt{N}} = \frac{9.5}{\sqrt{20}} \approx 2.124$$

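And finally, we calculate our $z$-score:

$$z_{\bar{X}} = \frac{\bar{X} - \mu_0}{\text{se}(\bar{X})} = \frac{72.3 - 67.5}{2.124} \approx 2.26$$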

At this point, we would traditionally look up the value 2.26 in our table of critical values. Our original hypothesis was two-sided (we didn’t really have any theory about whether psychology students would be better or worse at statistics than other students), so our hypothesis test is two-sided (or two-tailed) also.

Looking at the little table shown earlier, we can see that 2.26 is bigger than the critical value of 1.96 that would be required to be significant at $\alpha = .05$, but smaller than the value of 2.58 that would be required to be significant at a level of $\alpha = .01$. Therefore, we can conclude that we have a significant effect, which we might write up by saying something like this:

With a mean grade of 72.3 in the sample of psychology students, and assuming a true population standard deviation of 9.5, we can conclude that the psychology students have significantly different statistics scores from the class average ($z = 2.26$, $N = 20$, $p < .05$).

However, what if we want an exact $p$-value? Well, back in the day, the tables of critical values were huge. You could look up your actual $z$-value and find the smallest value of $\alpha$ for which your data would be significant (which, as discussed earlier, is the very definition of a $p$-value). However, we are only discussing the $z$-test here as a way of understanding the $t$-test, so we will not go into the details of how to do this. If you are interested, you can find a table of critical values here. All in all, our $p$-value is approximately .024.
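With software, the exact $p$-value is simply the area of the standard normal distribution beyond $\pm z$. Here is a minimal sketch of the whole one-sample $z$-test in Python. The function name `one_sample_z_test` is our own invention for illustration. The inputs are the summary values listed above, with $\sigma = 9.5$ treated as known.

```python
import math
from statistics import NormalDist

def one_sample_z_test(sample_mean, mu0, sigma, n):
    """Two-sided one-sample z-test, assuming the population SD sigma is known."""
    se = sigma / math.sqrt(n)                # true standard error of the mean
    z = (sample_mean - mu0) / se             # standardised sample mean
    p = 2 * (1 - NormalDist().cdf(abs(z)))   # area in both tails beyond |z|
    return z, p

z, p = one_sample_z_test(sample_mean=72.3, mu0=67.5, sigma=9.5, n=20)
print(f"z = {z:.2f}, p = {p:.3f}")
```

This gives $z \approx 2.26$ and $p \approx .024$, in line with the hand calculation and the table lookup (between .01 and .05).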

All statistical tests make assumptions. Some tests make reasonable assumptions, while other tests do not. The one-sample $z$-test makes three basic assumptions:

- Normality. As usually described, the $z$-test assumes that the true population distribution is normal.49 This is often pretty reasonable, and not only that, it’s an assumption that we can check if we feel worried about it (see Section 11.7).

- Independence. The second assumption of the test is that the observations in your data set are not correlated with each other or related to each other in some funny way. This isn’t as easy to check statistically: it relies a bit on good experimental design. An obvious example of something that violates this assumption is a data set where you “copy” the same observation over and over again in your data file, so you end up with a massive “sample size” consisting of only one genuine observation. More realistically, you have to ask yourself if it’s really plausible to imagine that each observation is an entirely random sample from the population you’re interested in. In practice, this assumption is never met perfectly; but we try our best to design studies that minimise the problems of correlated data.

- Known standard deviation. The third assumption of the $z$-test is that the true standard deviation of the population is known to the researcher. This is just stupid. In no real-world data analysis problem do you know the standard deviation $\sigma$ of some population but remain completely ignorant about the mean $\mu$. In other words, this assumption is always wrong.

In view of the stupidity of assuming that $\sigma$ is known, let’s see if we can live without it. This takes us out of the dreary domain of the $z$-test and into the magical kingdom of the $t$-test, with unicorns, fairies, and leprechauns.

11.2 The one-sample $t$-test

It might not be safe to assume that the psychology student grades necessarily have the same standard deviation as the other students in Dr Zeppo’s class. After all, if we were entertaining the hypothesis that they don’t have the same mean, why should we assume they have the same standard deviation? In view of this, we must stop assuming we know the true value of $\sigma$. But that violates the assumptions of the $z$-test, which brings us back to square one. Fortunately, we still have the raw data loaded into CogStat, and those can give us an estimate of the population standard deviation.

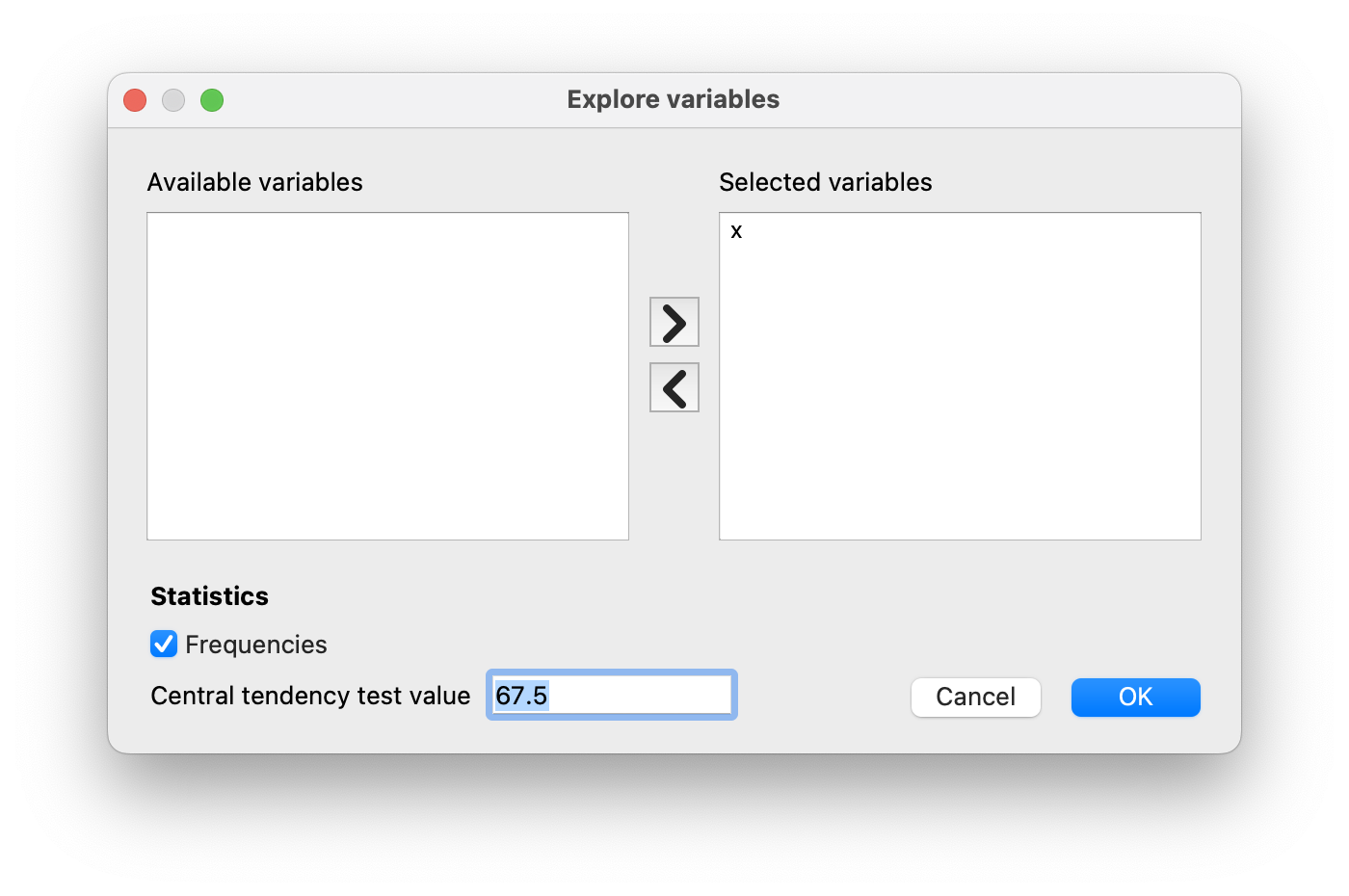

Having loaded the grades data and explored the descriptive statistics, let us take note of a few values from the Descriptives for the variable and the Population parameter estimations sections of the CogStat output. But first, let us re-run the Explore variable function, this time telling CogStat to use our null hypothesis of $\mu_0 = 67.5$. To do that, we fill in 67.5 in the dialogue. The population parameter estimates look like this:

Population parameter estimations

Present confidence interval values for the mean suppose normality.

| | Point estimation | 95% confidence interval (low) | 95% confidence interval (high) |
|---|---|---|---|
| Mean | 72.3 | 67.8 | 76.8 |
| Standard deviation | 9.5 | 7.2 | 13.9 |

In other words, while we can’t say that we know that $\sigma = 9.5$, we can say that $\hat{\sigma} = 9.5$. (Actually, the unrounded estimate is a little above 9.5. CogStat rounds it to one decimal place for display, but it uses the exact value in all calculations.)

Because we are now relying on an estimate of the population standard deviation, we need to make some adjustment for the fact that we have some uncertainty about what the true population standard deviation actually is. Maybe our data are just a fluke, and the true population standard deviation is 11, for instance. But if that were actually true, and we ran the $z$-test assuming $\sigma = 11$, then the result would end up being non-significant. That’s a problem, and it’s one we’re going to have to address.
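The claim about $\sigma = 11$ is easy to verify. This short sketch (plain Python, standard library only) reruns the $z$-test with the same sample mean and sample size under both assumed standard deviations. Note that 11 is only the hypothetical value from the text, not an estimate.

```python
import math
from statistics import NormalDist

sample_mean, mu0, n = 72.3, 67.5, 20
results = {}
for sigma in (9.5, 11.0):  # assumed (not estimated) population SDs
    z = (sample_mean - mu0) / (sigma / math.sqrt(n))
    p = 2 * (1 - NormalDist().cdf(abs(z)))   # two-sided p-value
    results[sigma] = (z, p)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"sigma = {sigma}: z = {z:.2f}, p = {p:.3f} ({verdict} at alpha = .05)")
```

With $\sigma = 11$ the $z$-statistic drops to about 1.95, just short of the 1.96 cut-off, so the same data would no longer count as significant.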

Figure 11.7: Graphical illustration of the null and alternative hypotheses assumed by the (two-sided) one-sample $t$-test. Note the similarity to the $z$-test. The null hypothesis is that the population mean $\mu$ is equal to some specified value $\mu_0$, and the alternative hypothesis is that it is not. Like the $z$-test, we assume that the data are normally distributed; but we do not assume that the population standard deviation $\sigma$ is known in advance.

This ambiguity is annoying, and it was resolved in 1908 by a guy called William Sealy Gosset (Student, 1908), who was working as a chemist for the Guinness brewery at the time (see Box, 1987). Guinness took a dim view of its employees publishing statistical analyses (apparently, they felt it was a trade secret). So he published the work under the pseudonym “Student”, and to this day, the full name of the $t$-test is actually Student’s $t$-test. The critical thing that Gosset figured out is how we should accommodate the fact that we aren’t entirely sure what the true standard deviation is.50 The answer is that it subtly changes the sampling distribution.

In the $t$-test, our test statistic (now called a $t$-statistic) is calculated in almost exactly the same way as the $z$-statistic above. If our null hypothesis is that the true mean is $\mu_0$, but our sample has mean $\bar{X}$ and our estimate of the population standard deviation is $\hat{\sigma}$, then our $t$ statistic is:

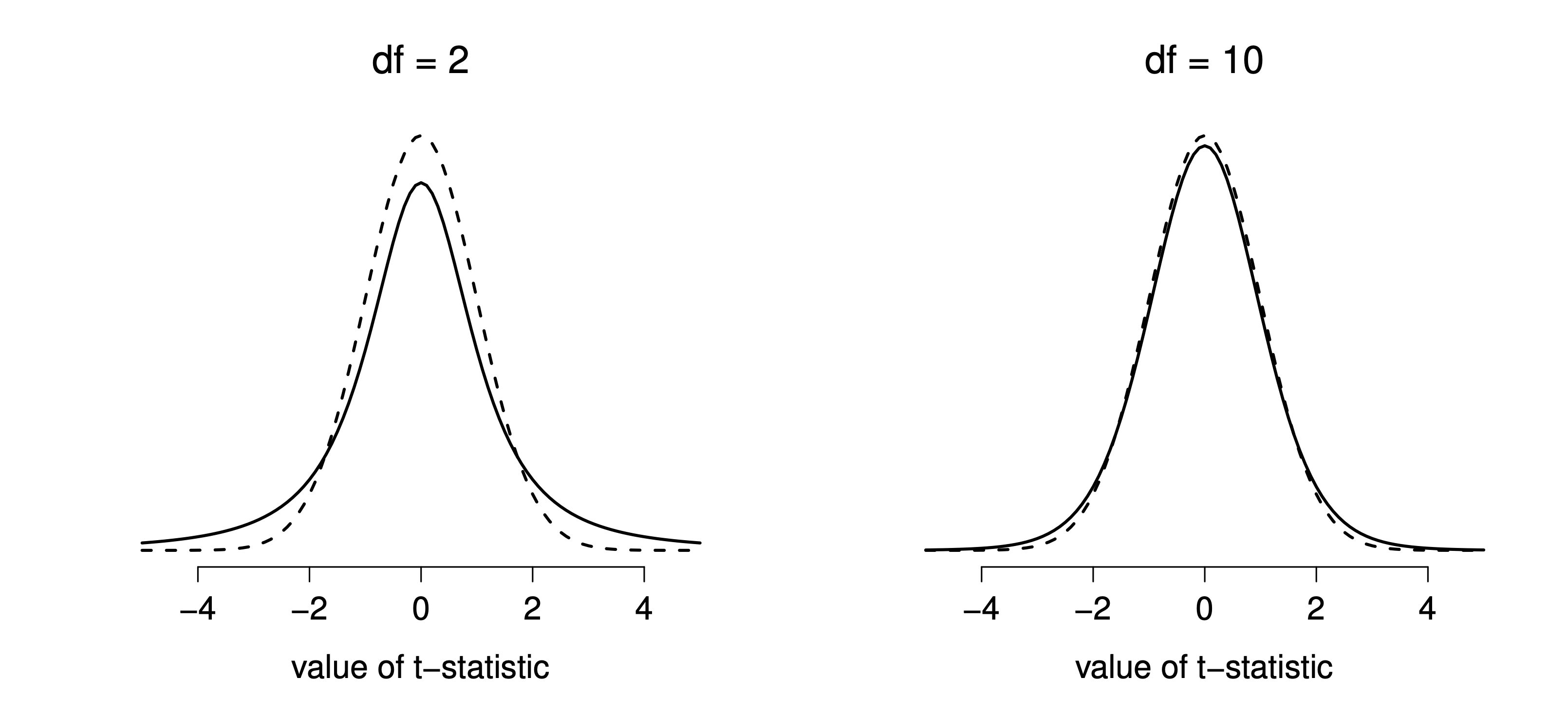

The only thing that has changed in the equation is that instead of using the known true value $\sigma$, we use the estimate $\hat{\sigma}$. And if this estimate has been constructed from $N$ observations, then the sampling distribution turns into a $t$-distribution with $N - 1$ degrees of freedom (df). The $t$-distribution is very similar to the normal distribution but has “heavier” tails, as discussed earlier in Chapter 7.4.4 and illustrated in Figure 11.8.

Notice, though, that as df gets larger, the $t$-distribution starts to look identical to the standard normal distribution. This is as it should be. With an enormous sample size (say, tens of millions of observations), your “estimate” of the standard deviation would be pretty much perfect, right? So, you should expect that for large $N$, the $t$-test would behave the same way as a $z$-test. And that’s exactly what happens!

Figure 11.8: The $t$ distribution with 2 degrees of freedom (left) and 10 degrees of freedom (right), with a standard normal distribution (i.e., mean 0 and standard deviation 1) plotted as dotted lines for comparison purposes. Notice that the $t$ distribution has heavier tails (higher kurtosis) than the normal distribution; this effect is quite exaggerated when the degrees of freedom are very small, but negligible for larger values. In other words, for large df the $t$ distribution is essentially identical to a normal distribution.
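To make the convergence concrete, this sketch computes two-sided 5% critical values of the $t$ distribution for a few df and compares them with the normal value of 1.96. To stay within Python’s standard library, it integrates the $t$ density numerically (Simpson’s rule) and inverts the CDF by bisection. This is our own rough approximation for illustration, not how CogStat computes anything. In practice you would use a library routine such as SciPy’s `scipy.stats.t.ppf`.

```python
import math

def t_cdf(x, df, steps=2000):
    """P(T <= x) for Student's t with df degrees of freedom (Simpson's rule)."""
    # normalising constant via lgamma, which avoids overflow for large df
    c = math.exp(math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                 - 0.5 * math.log(df * math.pi))

    def pdf(u):
        return c * (1 + u * u / df) ** (-(df + 1) / 2)

    a = abs(x)
    h = a / steps                      # steps must be even for Simpson's rule
    total = pdf(0.0) + pdf(a)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(i * h)
    area = total * h / 3               # P(0 <= T <= |x|)
    return 0.5 + area if x >= 0 else 0.5 - area

def t_critical_two_sided(alpha, df):
    """Value c with P(|T| > c) = alpha, found by bisection on t_cdf."""
    lo, hi = 0.0, 50.0
    for _ in range(50):
        mid = (lo + hi) / 2
        if 2 * (1 - t_cdf(mid, df)) > alpha:
            lo = mid                   # tail area still too large: move outwards
        else:
            hi = mid
    return (lo + hi) / 2

crit = {df: t_critical_two_sided(0.05, df) for df in (2, 10, 30, 1000)}
for df, c in crit.items():
    print(f"df = {df:>4}: critical t = {c:.3f}   (normal: 1.960)")
```

The critical value shrinks from about 4.30 at 2 df towards 1.96 as df grows. This is the heavy-tail effect shown in Figure 11.8, expressed as a number.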

As you might expect, the mechanics of the $t$-test are almost identical to the mechanics of the $z$-test. So there’s not much point in going through the tedious exercise of showing you how to do the calculations. They’re pretty much identical to the calculations that we did earlier, except that we use the estimated standard deviation $\hat{\sigma}$, and then we test our hypothesis using the $t$ distribution rather than the normal distribution.
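For completeness, here is a minimal sketch of those calculations in Python, using the summary values from the CogStat output ($\bar{X} = 72.3$, $\hat{\sigma} = 9.5$, $N = 20$, $\mu_0 = 67.5$). Because it uses the rounded standard deviation, it gives $t \approx 2.26$ rather than CogStat’s 2.25, which is computed from the unrounded estimate. The $t$ CDF is approximated by numerical integration (Simpson’s rule), so the sketch needs only the standard library. The helper names are our own.

```python
import math

def t_cdf(x, df, steps=2000):
    """P(T <= x) for Student's t with df degrees of freedom (Simpson's rule)."""
    c = math.exp(math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                 - 0.5 * math.log(df * math.pi))   # normalising constant

    def pdf(u):
        return c * (1 + u * u / df) ** (-(df + 1) / 2)

    a = abs(x)
    h = a / steps
    total = pdf(0.0) + pdf(a)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(i * h)
    area = total * h / 3                            # P(0 <= T <= |x|)
    return 0.5 + area if x >= 0 else 0.5 - area

def one_sample_t_test(sample_mean, mu0, sd_hat, n):
    """Two-sided one-sample t-test from summary statistics."""
    se_hat = sd_hat / math.sqrt(n)        # estimated standard error
    t = (sample_mean - mu0) / se_hat      # same form as z, but with sd_hat
    df = n - 1
    p = 2 * (1 - t_cdf(abs(t), df))       # tail areas of the t distribution
    return t, df, p

t, df, p = one_sample_t_test(sample_mean=72.3, mu0=67.5, sd_hat=9.5, n=20)
print(f"t({df}) = {t:.2f}, p = {p:.3f}")
```

The $p$-value comes out at about .036, matching the CogStat result discussed below.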

So let’s just do it in CogStat and go through the output. The Population parameter estimations stayed the same, so there’s no need to quote them here again. However, let’s look at the Hypothesis tests results.

Hypothesis tests

Testing if mean deviates from the value 67.5.

Interval variable. >> Choosing one-sample t-test or Wilcoxon signed-rank test depending on the assumption.

Checking for normality.

Shapiro-Wilk normality test in variable x: W = 0.96, p = .586

Normality is not violated. >> Running one-sample t-test.

Sensitivity power analysis. Minimal effect size to reach 95% power with the present sample size for the present hypothesis test. Minimal effect size in d: 0.85.

One sample t-test against 67.5: t(19) = 2.25, p = .036

Result of the Bayesian one sample t-test: BF10 = 1.80, BF01 = 0.56

Let us go through them line by line.

- Testing if mean deviates from the value 67.5.: good, this confirms that we are testing against a null hypothesis of $\mu_0 = 67.5$.

- Interval variable. >> Choosing one-sample t-test or Wilcoxon signed-rank test depending on the assumption.: this means that CogStat will choose either the $t$-test or the Wilcoxon signed-rank test, depending on whether normality (an assumption of both the $z$- and the $t$-test) holds. We will discuss the Wilcoxon signed-rank test later on.

- Normality is not violated. >> Running one-sample t-test.: this is good, because the $t$-test assumes that the data are normally distributed. The Shapiro-Wilk test on the line before ($p = .586$) found no significant deviation from normality.

- One sample t-test against 67.5: t(19) = 2.25, p = .036: this is the result of the $t$-test. The $t$-statistic is 2.25, and the $p$-value is .036. This means that we can reject the null hypothesis that $\mu = 67.5$ at the 5% level of significance. In other words, we can conclude that the population mean of the psychology students’ grades is significantly different from 67.5.

Don’t forget to take note of the Mean’s 95% confidence interval values from the Population parameter estimations, and now we could report the result by saying something like this:

With a mean grade of 72.3, the psychology students scored slightly higher than the average grade of 67.5 ($t(19) = 2.25$, $p = .036$); the 95% confidence interval is [67.8, 76.8].

Here, $t(19)$ is shorthand notation for a $t$-statistic that has 19 degrees of freedom. That said, it’s often the case that people don’t report the confidence interval, or do so using a much more compressed form. For instance, it’s not uncommon to see the confidence interval included as part of the stat block, like this:

$t(19) = 2.25$, $p = .036$, $\text{CI}_{95} = [67.8, 76.8]$

With that much jargon crammed into half a line, you know it must be really smart.

Okay, so what assumptions does the one-sample $t$-test make? Well, since the $t$-test is basically a $z$-test with the premise of known standard deviation removed, you shouldn’t be surprised to see that it makes the same assumptions as the $z$-test, minus the one about the known standard deviation. That is:

- Normality. We’re still assuming that the population distribution is normal.

- Independence. Once again, we have to assume that the observations in our sample are generated independently of one another.

Overall, these two assumptions aren’t terribly unreasonable, and as a consequence, the one-sample $t$-test is pretty widely used in practice as a way of comparing a sample mean against a hypothesised population mean.

11.3 The independent samples $t$-test (Student test)

Although the one-sample $t$-test has its uses, it’s not the most typical example of a $t$-test. A much more common situation arises when you’ve got two different groups of observations. In psychology, this tends to correspond to two different groups of participants, where each group corresponds to a different condition in your study. For each person in the study, you measure some outcome variable of interest, and the research question that you’re asking is whether or not the two groups have the same population mean. This is the situation that the independent samples $t$-test is designed for.

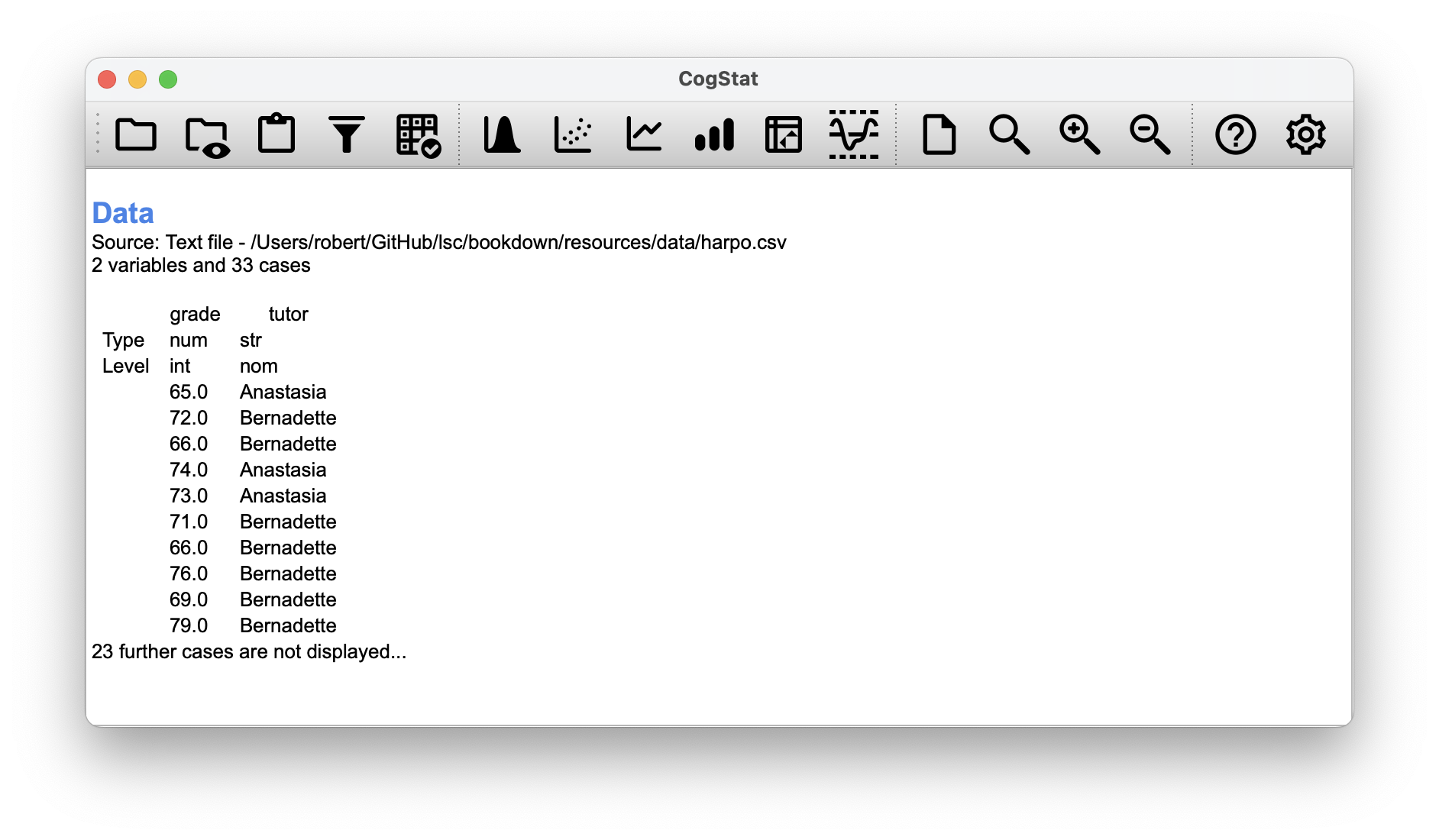

Suppose we have 33 students taking Dr Harpo’s statistics lectures, and Dr Harpo doesn’t grade to a curve. Actually, Dr Harpo’s grading is a bit of a mystery, so we don’t really know anything about what the average grade is for the class as a whole. There are two tutors for the class, Anastasia and Bernadette. There are $N_1 = 15$ students in Anastasia’s tutorials, and $N_2 = 18$ in Bernadette’s tutorials. The research question we’re interested in is whether Anastasia or Bernadette is a better tutor, or if it doesn’t make much of a difference.

As usual, let’s load the file harpo.csv.

As we can see, we have two variables: grade and tutor. The grade variable is a numeric interval variable containing the grades for all students taking Dr Harpo’s class; the tutor variable is a categorical (nominal) variable that indicates who each student’s tutor was.

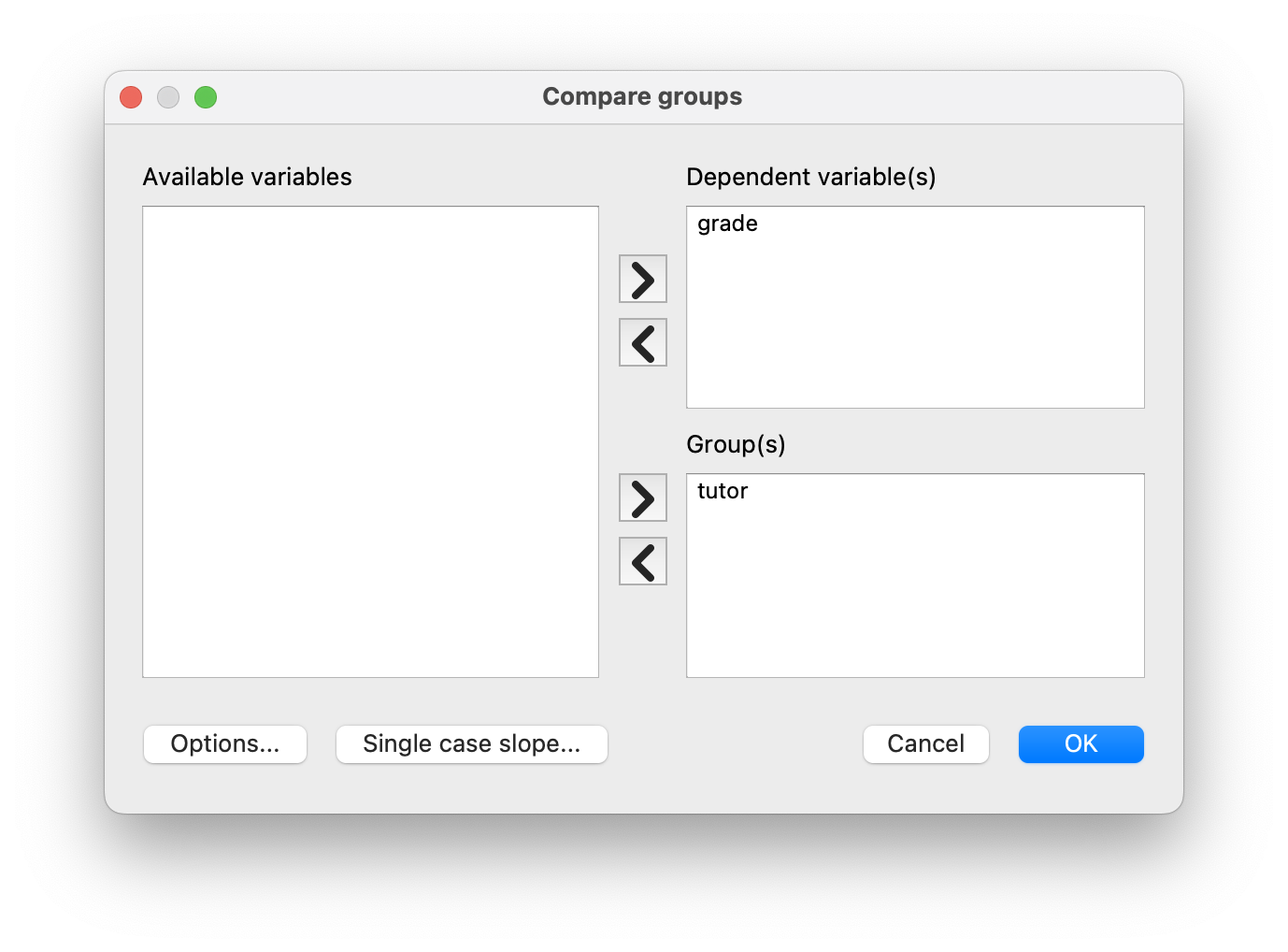

Let’s run the Compare groups function so we can get some information about the grades by tutor.

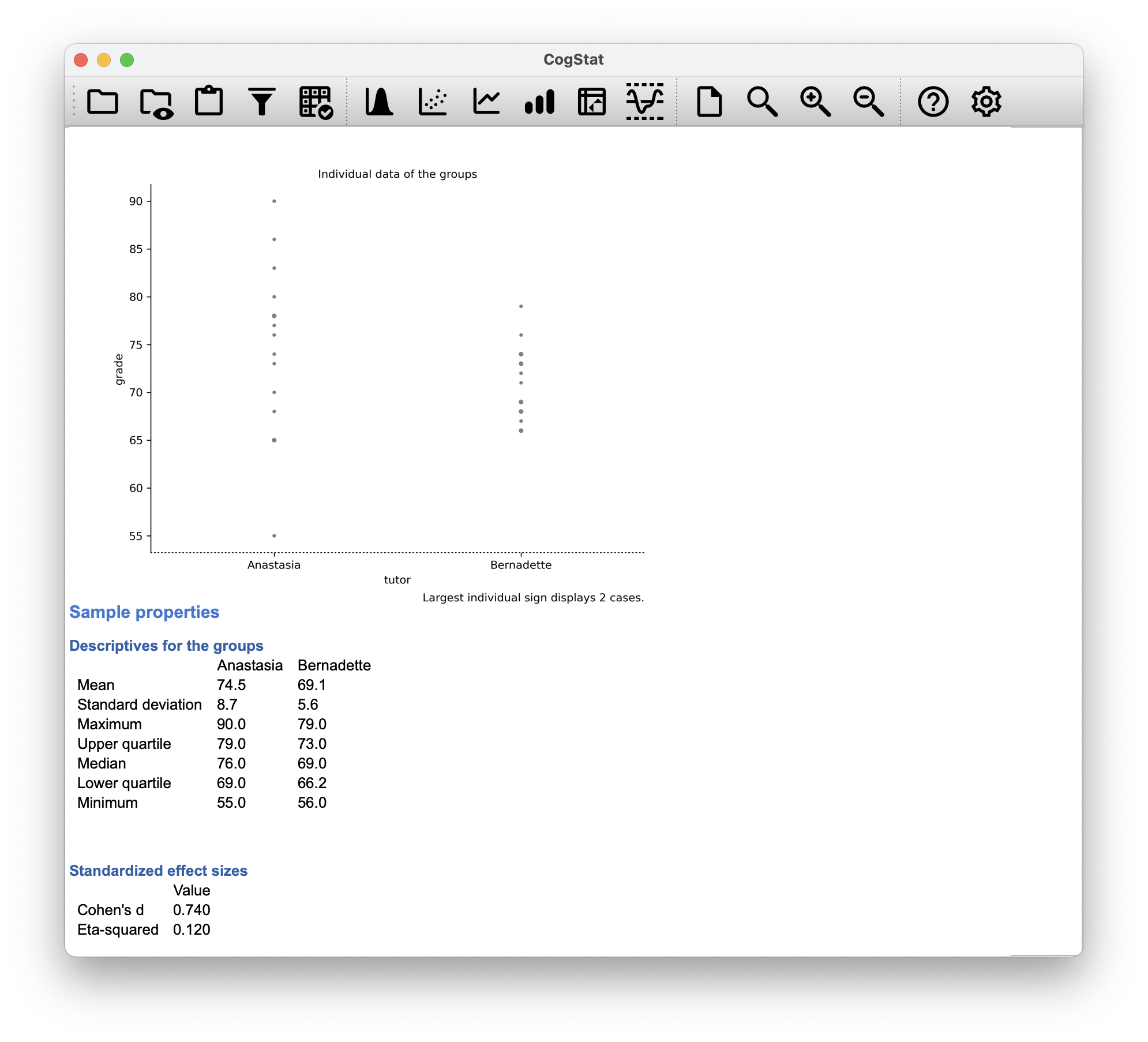

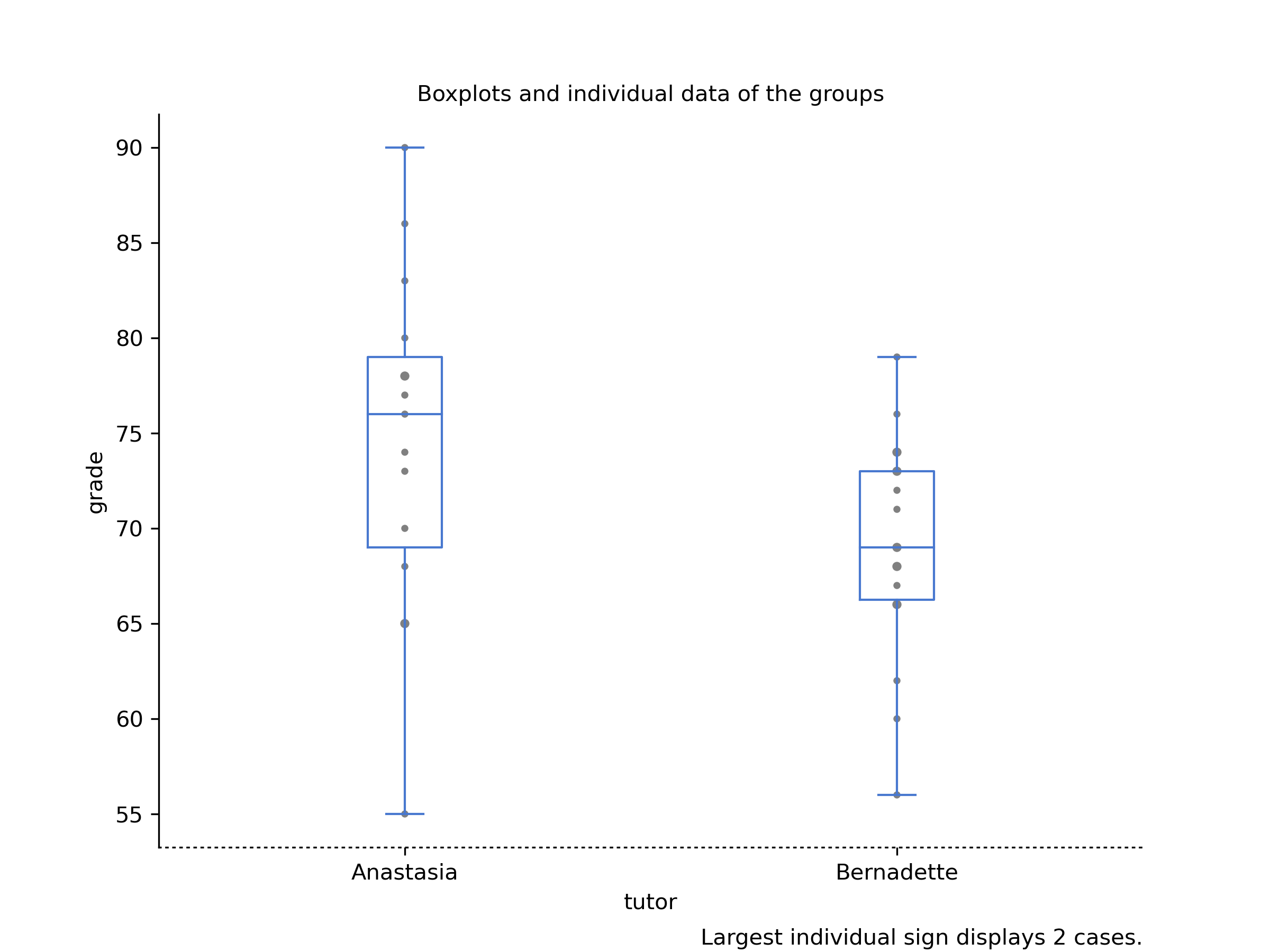

Figure 11.9: Comparing grades by tutor in CogStat for our harpo data set.

In the boxplot generated by CogStat, we can see the individual grades together with the medians and the upper and lower quartiles for each tutor. The grades for Anastasia’s students are slightly higher than those for Bernadette’s students, and they are also somewhat more spread out.

The independent samples $t$-test comes in two different forms, Student’s and Welch’s. The original Student $t$-test – which is the one we’ll describe in this section – is the simpler of the two but relies on much more restrictive assumptions than the Welch $t$-test. Assuming for the moment that you want to run a two-sided test, the goal is to determine whether two “independent samples” of data are drawn from populations with the same mean (the null hypothesis) or different means (the alternative hypothesis).

When we say “independent” samples, what we really mean here is that there’s no special relationship between observations in the two samples. This probably doesn’t make a lot of sense right now, but it will be clearer when we come to talk about the paired samples $t$-test later on. For now, let’s just point out what this means in practice. Suppose we have an experimental design where participants are randomly allocated to one of two groups, and we want to compare the two groups’ mean performance on some outcome measure. Then an independent samples $t$-test (rather than a paired samples $t$-test) is what we’re after.

Okay, so let’s let $\mu_1$ denote the true population mean for group 1 (e.g., Anastasia’s students), and $\mu_2$ will be the true population mean for group 2 (e.g., Bernadette’s students),51 and as usual, we’ll let $\bar{X}_1$ and $\bar{X}_2$ denote the observed sample means for both of these groups. Our null hypothesis states that the two population means are identical ($\mu_1 = \mu_2$), and the alternative to this is that they are not ($\mu_1 \neq \mu_2$).

Figure 11.10: Graphical illustration of the null and alternative hypotheses assumed by the Student $t$-test. The null hypothesis assumes that both groups have the same mean $\mu$, whereas the alternative assumes that they have different means $\mu_1$ and $\mu_2$. Notice that it is assumed that the population distributions are normal. Although the alternative hypothesis allows the groups to have different means, it assumes they have the same standard deviation $\sigma$.

To construct a hypothesis test that handles this scenario, we start by noting that if the null hypothesis is true, then the difference between the population means is exactly zero,

$$\mu_1 - \mu_2 = 0$$

As a consequence, a diagnostic test statistic will be based on the difference between the two sample means, because if the null hypothesis is true, then we’d expect $\bar{X}_1 - \bar{X}_2$ to be pretty close to zero. However, just like we saw with our one-sample tests (i.e., the one-sample $z$-test and the one-sample $t$-test), we have to be precise about exactly how close to zero this difference should be. And the solution to the problem is more or less the same one: we calculate a standard error estimate (SE), just like last time, and then divide the difference between means by this estimate. So our $t$-statistic will be of the form

$$t = \frac{\bar{X}_1 - \bar{X}_2}{\text{SE}}$$

We just need to figure out what this standard error estimate actually is. This is a bit trickier than was the case for either of the two tests we’ve looked at so far, so we need to go through it a lot more carefully to understand how it works.

In the original “Student $t$-test”, we make the assumption that the two groups have the same population standard deviation: that is, regardless of whether the population means are the same, we assume that the population standard deviations are identical, $\sigma_1 = \sigma_2$. Since we’re assuming that the two standard deviations are the same, we drop the subscripts and refer to both of them as $\sigma$.

How should we estimate this? How should we construct a single estimate of a standard deviation when we have two samples? The answer is, we take a weighted average of the variance estimates, which we use as our pooled estimate of the variance. The weight assigned to each sample is equal to the number of observations in that sample, minus 1:

$$w_1 = N_1 - 1, \qquad w_2 = N_2 - 1$$

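Now that we’ve assigned weights to each sample, we calculate the pooled estimate of the variance by taking the weighted average of the two variance estimates, $\hat{\sigma}_1^2$ and $\hat{\sigma}_2^2$:

$$\hat{\sigma}_p^2 = \frac{w_1 \hat{\sigma}_1^2 + w_2 \hat{\sigma}_2^2}{w_1 + w_2}$$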

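Finally, we convert the pooled variance estimate to a pooled standard deviation estimate by taking the square root. This gives us the following formula for $\hat{\sigma}_p$:

$$\hat{\sigma}_p = \sqrt{\frac{w_1 \hat{\sigma}_1^2 + w_2 \hat{\sigma}_2^2}{w_1 + w_2}}$$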

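And if you mentally substitute $w_1 = N_1 - 1$ and $w_2 = N_2 - 1$ into this equation, you get a very ugly formula that actually seems to be the “standard” way of describing the pooled standard deviation estimate:

$$\hat{\sigma}_p = \sqrt{\frac{(N_1 - 1)\hat{\sigma}_1^2 + (N_2 - 1)\hat{\sigma}_2^2}{N_1 + N_2 - 2}}$$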
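Here is a minimal Python sketch of this pooled estimate. It works from each group’s sample size and its $N - 1$ denominator standard deviation. The function name `pooled_sd` and the example numbers are hypothetical, chosen only so that the arithmetic is easy to follow.

```python
import math

def pooled_sd(sd1, n1, sd2, n2):
    """Pooled SD: square root of the (n - 1)-weighted average of two variances."""
    w1, w2 = n1 - 1, n2 - 1                  # weights: sample size minus 1
    pooled_var = (w1 * sd1**2 + w2 * sd2**2) / (w1 + w2)
    return math.sqrt(pooled_var)

# Hypothetical groups: 11 people with SD 2, and 6 people with SD 4
sp = pooled_sd(sd1=2.0, n1=11, sd2=4.0, n2=6)
print(f"pooled SD = {sp:.4f}")   # weights 10 and 5: (10*4 + 5*16) / 15 = 8
```

Notice that the larger group pulls the pooled estimate towards its own standard deviation. A simple average of the two SDs would give 3, whereas the pooled value is $\sqrt{8} \approx 2.83$.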

But let us describe it a bit differently:

Our data set actually corresponds to a set of $N$ observations, which are sorted into two groups. So let’s use the notation $X_{ik}$ to refer to the grade received by the $i$-th student in the $k$-th tutorial group: that is, $X_{11}$ is the grade received by the first student in Anastasia’s class, $X_{21}$ is her second student, and so on. And we have two separate group means $\bar{X}_1$ and $\bar{X}_2$, which we could “generically” refer to using the notation $\bar{X}_k$, i.e., the mean grade for the $k$-th tutorial group. Every single student falls into one of the two tutorials, so we can describe each student’s deviation from their group mean as the difference

$$X_{ik} - \bar{X}_k$$

So why not just use these deviations (i.e., the extent to which each student’s grade differs from the mean grade in their tutorial)? Remember, a variance is just the average of a bunch of squared deviations, so let’s do that:

$$\hat{\sigma}_p^2 = \frac{\sum_{ik} \left(X_{ik} - \bar{X}_k\right)^2}{N}$$

where the notation “$\sum_{ik}$” is a lazy way of saying “calculate a sum by looking at all students in all tutorials”, since each “$ik$” corresponds to one student. But, as we saw in Chapter 8, calculating the variance by dividing by $N$ produces a biased estimate of the population variance. And previously, we needed to divide by $N - 1$ to fix this. However, the reason why this bias exists is because the variance estimate relies on the sample mean; and to the extent that the sample mean isn’t equal to the population mean, it can systematically bias our estimate of the variance. But this time we’re relying on two sample means! Does this mean that we’ve got more bias? Yes, yes it does. And does this mean we now need to divide by $N - 2$ instead of $N - 1$, in order to calculate our pooled variance estimate? Why, yes:

$$\hat{\sigma}_p^2 = \frac{\sum_{ik} \left(X_{ik} - \bar{X}_k\right)^2}{N - 2}$$

Oh, and if you take the square root of this then you get $\hat{\sigma}_p$, the pooled standard deviation estimate. In other words, the pooled standard deviation calculation is nothing special: it’s not terribly different from the regular standard deviation calculation.
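The two descriptions really are the same calculation. This sketch (Python, standard library; the tiny data set is hypothetical) computes the pooled variance both ways. First it divides the squared deviations around each group’s own mean by $N - 2$. Then it takes the weighted average of the two group variances. Both routes give the same number.

```python
import statistics

# Hypothetical grades for two small tutorial groups
groups = [[3, 5, 7], [1, 2, 3, 6]]
N = sum(len(g) for g in groups)

# View 1: squared deviations from each group's own mean, divided by N - 2
ss = sum((x - statistics.fmean(g)) ** 2 for g in groups for x in g)
var_from_deviations = ss / (N - 2)

# View 2: weighted average of the group variances, with weights N_k - 1
# (statistics.variance already uses the N_k - 1 denominator)
weights = [len(g) - 1 for g in groups]
var_weighted = sum(w * statistics.variance(g)
                   for w, g in zip(weights, groups)) / sum(weights)

print(var_from_deviations, var_weighted)
```

Here the squared deviations sum to 8 + 14 = 22, and dividing by $N - 2 = 5$ gives 4.4 either way.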

Regardless of which way you want to think about it, we now have our pooled estimate of the standard deviation. From now on, we’ll refer to this estimate as $\hat{\sigma}_p$. Let’s now go back to thinking about the hypothesis test.

Our whole reason for calculating this pooled estimate was that we knew it would be helpful when calculating our standard error estimate. But, standard error of what? In the one-sample $t$-test, it was the standard error of the sample mean, $\text{se}(\bar{X})$. Since $\text{se}(\bar{X}) = \hat{\sigma} / \sqrt{N}$, that’s what the denominator of our $t$-statistic looked like. This time around, however, we have two sample means. And what we’re interested in, specifically, is the difference between the two, $\bar{X}_1 - \bar{X}_2$. Consequently, the standard error that we need to divide by is in fact the standard error of the difference between means. As long as the two groups really do have the same standard deviation, our estimate for the standard error is

$$\text{se}(\bar{X}_1 - \bar{X}_2) = \hat{\sigma}_p \sqrt{\frac{1}{N_1} + \frac{1}{N_2}}$$

and our $t$-statistic is therefore

$$t = \frac{\bar{X}_1 - \bar{X}_2}{\text{se}(\bar{X}_1 - \bar{X}_2)}$$

Just as we saw with our one-sample test, the sampling distribution of this $t$-statistic is a $t$-distribution as long as the null hypothesis is true and all of the assumptions of the test are met. The degrees of freedom, however, are slightly different. As usual, we can think of the degrees of freedom as the number of data points minus the number of constraints. In this case, we have $N$ observations ($N_1$ in sample 1, and $N_2$ in sample 2), and 2 constraints (the sample means). So the total degrees of freedom for this test are $N - 2$.
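Putting the pieces together, here is a minimal Python sketch of the Student (pooled-variance) independent samples $t$-statistic and its degrees of freedom. The function name is our own and the two small groups are hypothetical. For Dr Harpo’s 33 students, the same df formula gives $33 - 2 = 31$.

```python
import math
import statistics

def student_t(group1, group2):
    """Student's independent samples t-statistic (equal variances assumed) and df."""
    n1, n2 = len(group1), len(group2)
    m1, m2 = statistics.fmean(group1), statistics.fmean(group2)
    # pooled variance: (n - 1)-weighted average of the two group variances
    pooled_var = ((n1 - 1) * statistics.variance(group1)
                  + (n2 - 1) * statistics.variance(group2)) / (n1 + n2 - 2)
    # standard error of the difference between the two sample means
    se_diff = math.sqrt(pooled_var) * math.sqrt(1 / n1 + 1 / n2)
    t = (m1 - m2) / se_diff
    df = n1 + n2 - 2          # N observations minus 2 estimated means
    return t, df

t, df = student_t([3, 5, 7], [1, 2, 3, 6])
print(f"t({df}) = {t:.3f}")
```

The resulting $t$ would then be compared against a $t$ distribution with `df` degrees of freedom, exactly as in the one-sample case.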

The output from CogStat has a very familiar form. Let’s start with the confidence interval, and for that, let’s circle back to our Population parameter estimations:

Population parameter estimations

Means

Present confidence interval values suppose normality.

| tutor | Point estimation | 95% CI (low) | 95% CI (high) |
|---|---|---|---|
| Anastasia | 74.5 | 69.5 | 79.5 |
| Bernadette | 69.1 | 66.2 | 71.9 |
| Difference between the two groups: | 5.5 | 0.2 | 10.8 |

It’s pretty important to be clear on what this confidence interval actually refers to: it is a confidence interval for the difference between the group means. In our example, Anastasia’s students had an average grade of 74.5, and Bernadette’s students had an average grade of 69.1, so the difference between the two sample means is 5.5 (rather than the 5.4 you get from the rounded means shown in the table). But of course the difference between population means might be bigger or smaller than this. The reported interval gives a plausible range for that population difference, from 0.2 to 10.8: if we repeated the study many times, 95% of the intervals constructed this way would contain the true difference between means.

In any case, the difference between the two groups is significant (just barely), so we might write up the result using text like this:

The mean grade in Anastasia’s class was 74.5 (SD = 8.7), whereas the mean in Bernadette’s class was 69.1 (SD = 5.6). A Student’s independent samples $t$-test showed that this 5.5 difference was significant (, , ), suggesting that a genuine difference in learning outcomes has occurred.

At a bare minimum, you’d expect to see the $t$-statistic, the degrees of freedom and the $p$ value, so your write-up should include something like this: , . If statisticians had their way, everyone would also report the confidence interval and probably the effect size measure too, because they are useful things to know. But real life doesn’t always work the way statisticians want it to: you should make a judgment based on whether you think it will help your readers, and (if you’re writing a scientific paper) the editorial standard for the journal in question. Some journals expect you to report effect sizes, others don’t. Within some scientific communities it is standard practice to report confidence intervals; in others it is not. You’ll need to figure out what your audience expects.

Before moving on to talk about the assumptions of the $t$-test, there’s one additional point to make about the use of $t$-tests in practice. It relates to the sign of the $t$-statistic (that is, whether it is a positive number or a negative one). One very common worry that students have when they start running their first $t$-test is that they often end up with negative values for the $t$-statistic and don’t know how to interpret them. On closer inspection, they will notice that the confidence intervals also have the opposite signs. This is perfectly okay. The $t$-statistic calculated in CogStat is always of the form

$$t = \frac{(\text{mean } 1) - (\text{mean } 2)}{\mathrm{SE}}$$

If “mean 1” is larger than “mean 2” the statistic will be positive, whereas if “mean 2” is larger then the statistic will be negative. Similarly, the confidence interval is the confidence interval for the difference “(mean 1) minus (mean 2)”, which will be the reverse of what you’d get if you were calculating the confidence interval for the difference “(mean 2) minus (mean 1)”.

Okay, that’s pretty straightforward when you think about it, but now consider our $t$-test comparing Anastasia’s class to Bernadette’s class. Which one does CogStat call “mean 1” and which one does it call “mean 2”? Let’s look at the order in which the data are presented. Anastasia goes first in all the result outputs, so her class is “mean 1”. So, we would write

Anastasia’s class had higher grades than Bernadette’s class ().

On the other hand, suppose the phrasing we want to use has Bernadette’s class listed first. In that case it makes more sense to treat her class as group 1, and the write-up looks like this:

Bernadette’s class had lower grades than Anastasia’s class ().

Because we’re talking about one group having “lower” scores this time around, it is more sensible to use the negative form of the $t$-statistic. It just reads more cleanly.

One last thing: please note that you can’t do this for other types of test statistics. It works for $t$-tests, but it wouldn’t be meaningful for chi-square tests or $F$-tests. So don’t overgeneralise this advice!

As always, our hypothesis test relies on some assumptions. For the Student $t$-test there are three assumptions, some of which we saw previously in the context of the one-sample $t$-test:

- Normality. Specifically, we assume that both groups are normally distributed.

- Independence. In the context of the Student test, this has two aspects to it. Firstly, we assume that the observations within each sample are independent of one another (exactly the same as for the one-sample test). However, we also assume that there are no cross-sample dependencies. If, for instance, it turns out that you included some participants in both experimental conditions of your study (e.g. by accidentally allowing the same person to sign up to different conditions), then there are some cross-sample dependencies that you’d need to take into account.

- Homogeneity of variance (also called “homoscedasticity”). The third assumption is that the population standard deviation is the same in both groups. CogStat tests this assumption automatically using the Levene test, as you’ve seen in the result output. We’ll discuss the test itself later in the book (Section 12.6).

11.4 The independent samples $t$-test (Welch test)

The biggest problem with using the Student test in practice is the third assumption in the previous section: it assumes that both groups have the same standard deviation (i.e. homogeneity of variance). This is rarely true in real life: if two samples don’t have the same means, why should we expect them to have the same standard deviation? There’s no reason to expect this assumption to be true.

There is a different form of the $t$-test (Welch, 1947) that does not rely on this assumption. A graphical illustration of what the Welch test assumes about the data is shown in Figure 11.11, to provide a contrast with the Student test version in Figure 11.10. The Welch test is very similar to the Student test. For example, the $t$-statistic that we use in the Welch test is calculated in much the same way as for the Student test. That is, we take the difference between the sample means and then divide it by some estimate of the standard error of that difference:

$$t = \frac{\bar X_1 - \bar X_2}{\mathrm{SE}(\bar X_1 - \bar X_2)}$$

The main difference is that the standard error calculations are different. If the two populations have different standard deviations, then it’s utter nonsense to calculate a pooled standard deviation estimate, because you’re averaging apples and oranges. But you can still estimate the standard error of the difference between sample means; it just ends up looking different:

$$\mathrm{SE}(\bar X_1 - \bar X_2) = \sqrt{\frac{\hat\sigma_1^2}{N_1} + \frac{\hat\sigma_2^2}{N_2}}$$

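The second difference between Welch and Student is how the degrees of freedom are calculated. In the Welch test, the “degrees of freedom” doesn’t have to be a whole number, and it doesn’t correspond all that closely to the “number of data points minus the number of constraints” heuristic used up to this point. The degrees of freedom are, in fact:

$$\mathrm{df} = \frac{\left(\hat\sigma_1^2/N_1 + \hat\sigma_2^2/N_2\right)^2}{\dfrac{\left(\hat\sigma_1^2/N_1\right)^2}{N_1 - 1} + \dfrac{\left(\hat\sigma_2^2/N_2\right)^2}{N_2 - 1}}$$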

What matters is that the “df” value that pops out of a Welch test tends to be a little bit smaller than the one used for the Student test, and it doesn’t have to be a whole number.
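A companion sketch for the Welch version, assuming nothing beyond the formulas above: each group keeps its own variance estimate (Python’s `statistics.variance`, which divides by $n - 1$), and the degrees of freedom follow the Welch–Satterthwaite formula. The function name `welch_t` is hypothetical, and again no $p$ value is computed.

```python
import math
from statistics import mean, variance


def welch_t(x1, x2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    n1, n2 = len(x1), len(x2)
    # Squared standard error of each mean, using each group's own variance
    v1 = variance(x1) / n1
    v2 = variance(x2) / n2
    se = math.sqrt(v1 + v2)                # no pooling of the two SDs
    t = (mean(x1) - mean(x2)) / se
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df
```

With equal group sizes and equal sample variances it agrees with Student’s formula: `welch_t([1, 2, 3], [4, 5, 6])` gives df = 4. With very unequal variances, `welch_t([1, 2, 3], [0, 10, 20, 30])` gives df ≈ 3.05, a non-integer well below the Student value of 5.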

Figure 11.11: Graphical illustration of the null and alternative hypotheses assumed by the Welch $t$-test. Like the Student test, we assume that both samples are drawn from a normal population. However, the alternative hypothesis no longer requires the two populations to have equal variance.

In most statistical software, you can choose to use the Welch test instead of the Student test. In CogStat, the choice is made for you, based on a check for homogeneity of variance: if the two samples’ variances differ significantly, the Welch test is run automatically; otherwise, the Student test is run.

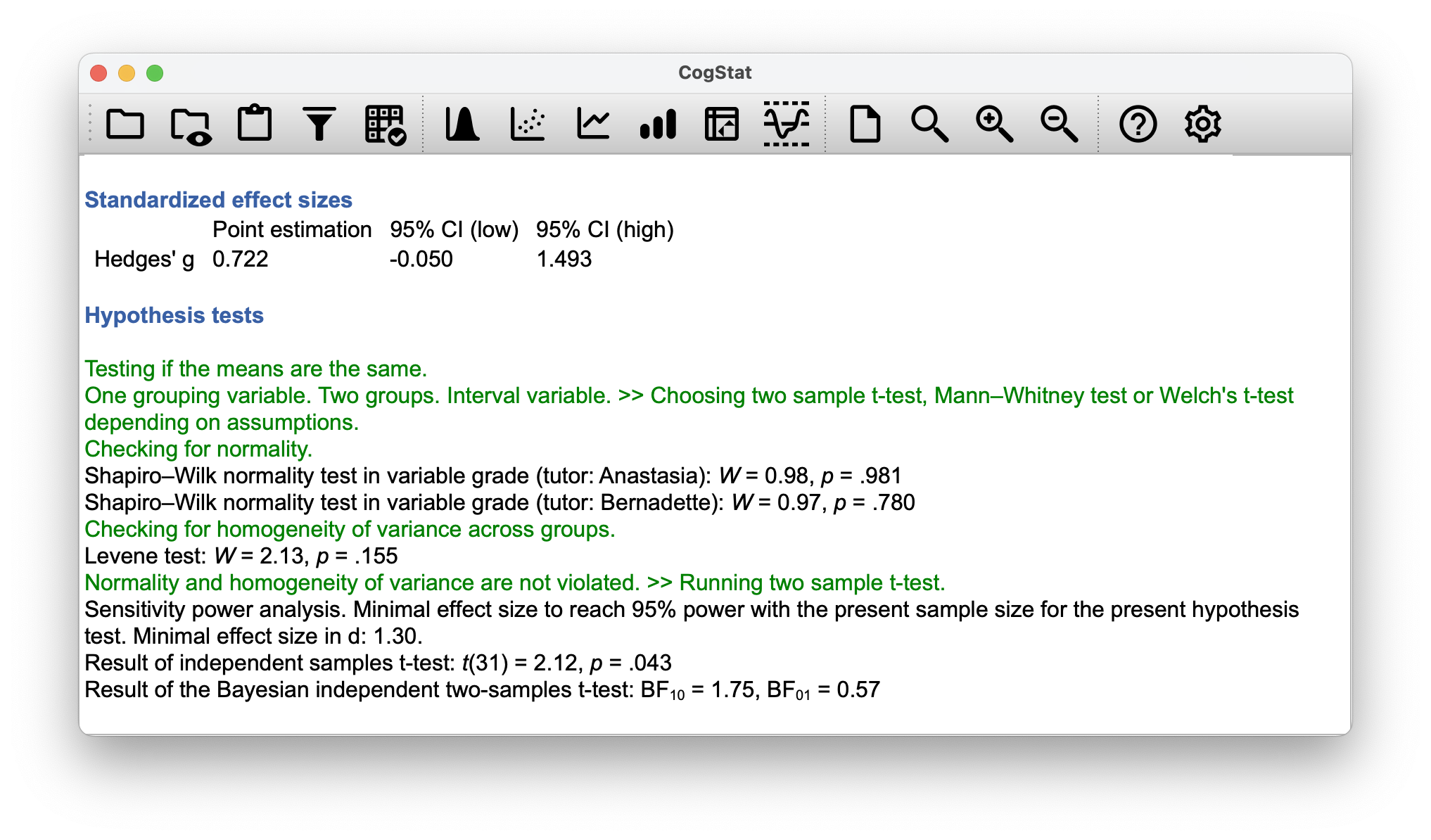

As we mentioned earlier, a Levene test can be used to probe for homogeneity of variance, and CogStat does just that! We skipped over this part of the output when discussing the Student test results in the previous section, but now it’s time to look at those specific lines in the result set.

Checking for homogeneity of variance across groups.

Levene test: W = 2.13, p = .155

If the Levene test is significant (i.e. $p < .05$), the Welch test will be run. If the Levene test is not significant (i.e. $p \geq .05$), the Student test will be run. In this case, the Levene test was not significant ($p = .155$), so the Student test was run.
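The text doesn’t show how CogStat computes the Levene statistic, so the following is only a sketch of the textbook definition: Levene’s $W$ is a one-way ANOVA $F$ statistic computed on each observation’s absolute deviation from its group’s centre. Whether CogStat centres on the group mean (Levene’s original) or the median (the Brown–Forsythe variant) is something we have not checked, so the centre is a parameter here. Turning $W$ into a $p$ value needs the $F$ distribution, which Python’s standard library lacks, so the decision rule takes the $p$ value as input. The names `levene_w` and `choose_test` are hypothetical, and the .05 threshold is our assumption.

```python
from statistics import mean, median


def levene_w(*groups, center=median):
    """Levene's W: a one-way ANOVA F statistic on absolute deviations.

    center=median gives the Brown-Forsythe variant; pass center=mean
    for Levene's original version.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    z = []
    for g in groups:
        c = center(g)                        # group centre (median or mean)
        z.append([abs(x - c) for x in g])    # absolute deviations from it
    z_means = [mean(zi) for zi in z]
    z_grand = sum(sum(zi) for zi in z) / n_total
    between = sum(len(zi) * (zm - z_grand) ** 2 for zi, zm in zip(z, z_means))
    within = sum((v - zm) ** 2 for zi, zm in zip(z, z_means) for v in zi)
    return (n_total - k) / (k - 1) * between / within


def choose_test(levene_p, alpha=0.05):
    """Decision rule from the text: Welch if the Levene test is significant."""
    return "Welch" if levene_p < alpha else "Student"
```

Groups with identical spread give $W = 0$, and with the Levene result above, `choose_test(0.155)` returns `"Student"`.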

Should we run a Welch test on the same data, it would not be significant (, ). What does this mean? Should we panic? Is the sky burning? Probably not. The fact that one test is significant and the other isn’t doesn’t itself mean very much, especially since I kind of rigged the data so that this would happen. As a general rule, it’s not a good idea to go out of your way to try to interpret or explain the difference between a $p$-value of .049 and a $p$-value of .051. If this sort of thing happens in real life, the difference in these $p$-values is almost certainly due to chance. What does matter is that you take a little bit of care in thinking about what test you use. The Student test and the Welch test have different strengths and weaknesses. If the two populations really do have equal variances, then the Student test is slightly more powerful (lower Type II error rate) than the Welch test. However, if they don’t have the same variances, then the assumptions of the Student test are violated and you may not be able to trust it: you might end up with a higher Type I error rate. So it’s a trade-off.

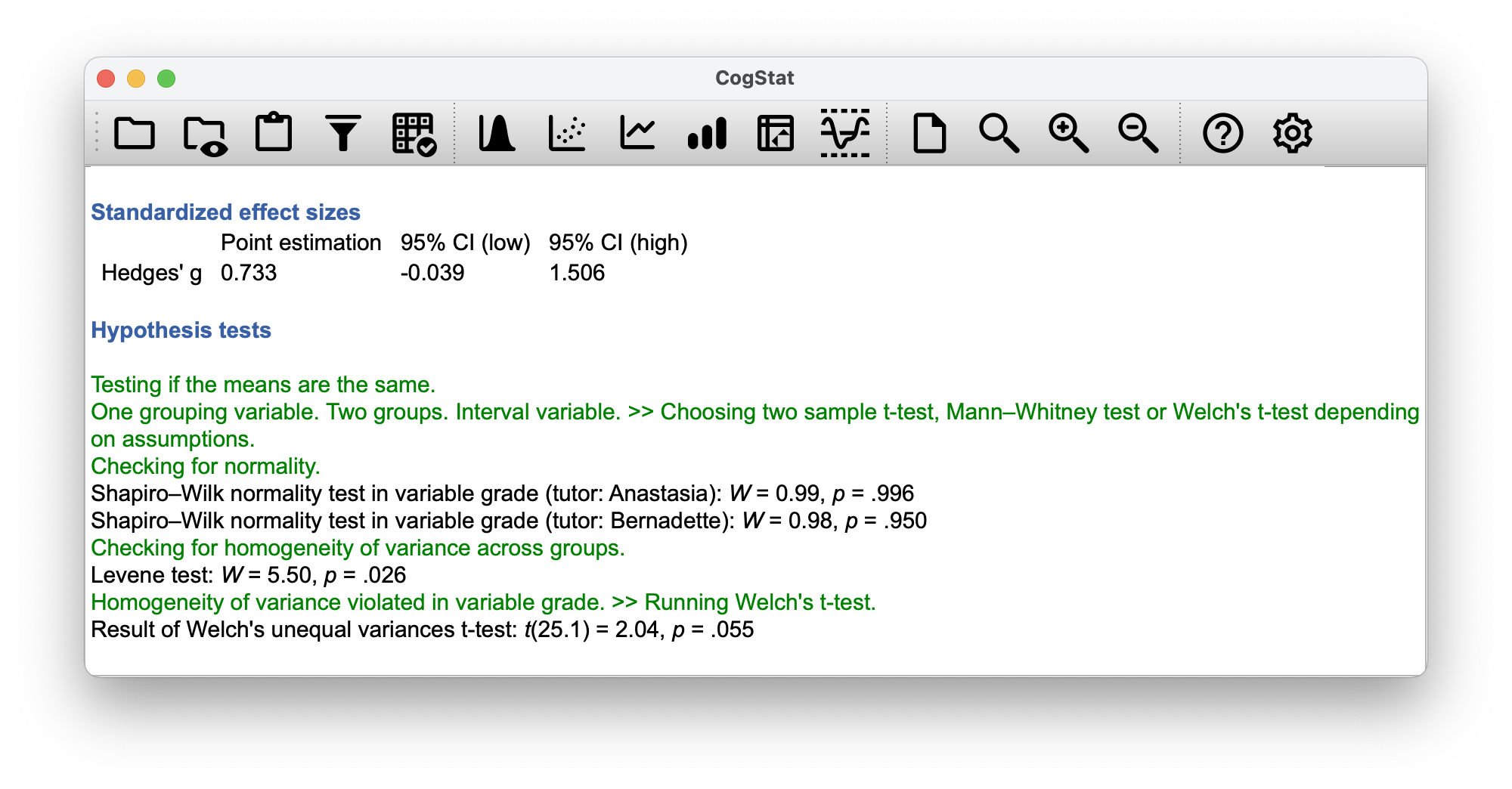

Now let us explore what CogStat displays when normality is not violated but homogeneity of variance is. We’ve prepared a tweaked version of the data set, harpo_welch.csv, in which the variances differ but normality is not violated.

Figure 11.12: CogStat result set when the Levene test is significant, hence a Welch test must be run.

Note how the degrees of freedom value is now a decimal rather than an integer. The data set, however, was only tweaked slightly, not enough for the $p$ value to fall below .05, so the Welch test is not significant. We just wanted to demonstrate that the Welch test is run in this case.

The assumptions of the Welch test are very similar to those made by the Student $t$-test, except that the Welch test does not assume homogeneity of variance. This leaves only the assumption of normality, and the assumption of independence. The specifics of these assumptions are the same for the Welch test as for the Student test.

11.5 The paired-samples $t$-test

Regardless of whether we’re talking about the Student test or the Welch test, an independent samples $t$-test is intended to be used in a situation where you have two samples that are, well, independent of one another. This situation arises naturally when participants are assigned randomly to one of two experimental conditions, but it provides a very poor approximation to other sorts of research designs. In particular, a repeated measures design (in which each participant is measured, with respect to the same outcome variable, in both experimental conditions) is not suited for analysis using independent samples $t$-tests.

For example, we might be interested in whether listening to music reduces people’s working memory capacity. To that end, we could measure each person’s working memory capacity in two conditions: with music, and without music. In an experimental design such as this one,52 each participant appears in both groups. This requires us to approach the problem in a different way: by using the paired samples $t$-test.

This time we’ll use a data set from Dr Chico’s class. In her class, students take two very challenging tests, one early in the semester and one later. Her theory is that the first test is a bit of a “wake-up call” for students: when they realise how hard her class really is, they’ll work harder for the second test and get a better mark. Is she right? To test this, let’s load the chico.csv file into CogStat.

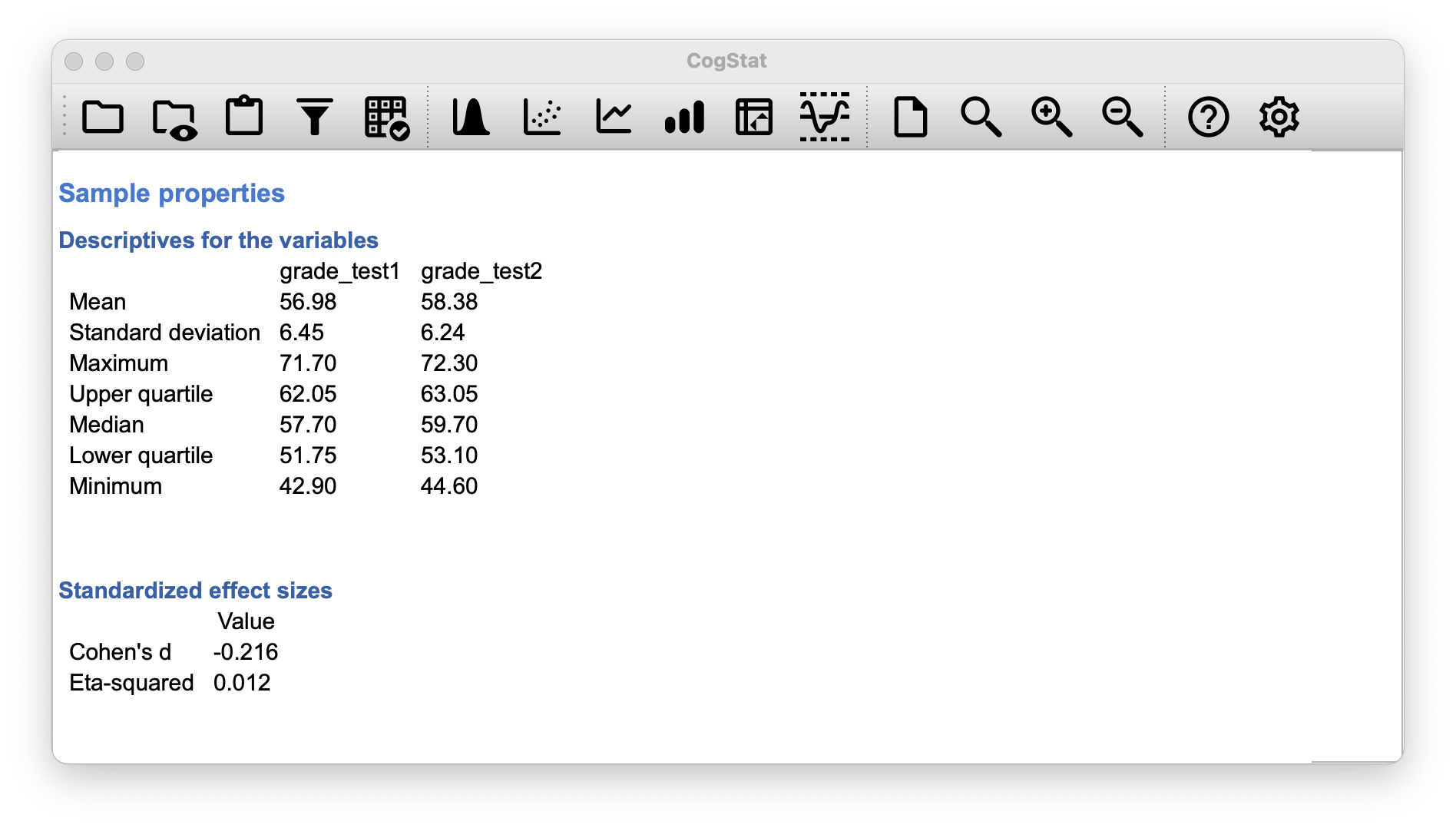

The data frame chico contains three variables: a nominal id variable that identifies each student in the class, the grade_test1 variable that records each student’s grade for the first test, and the grade_test2 variable that has the grades for the second test. Let’s take a quick look at the descriptive statistics. Since we are running a before-and-after comparison (as we already did in Chapter 10.7), let’s use the Compare repeated measures variables function.

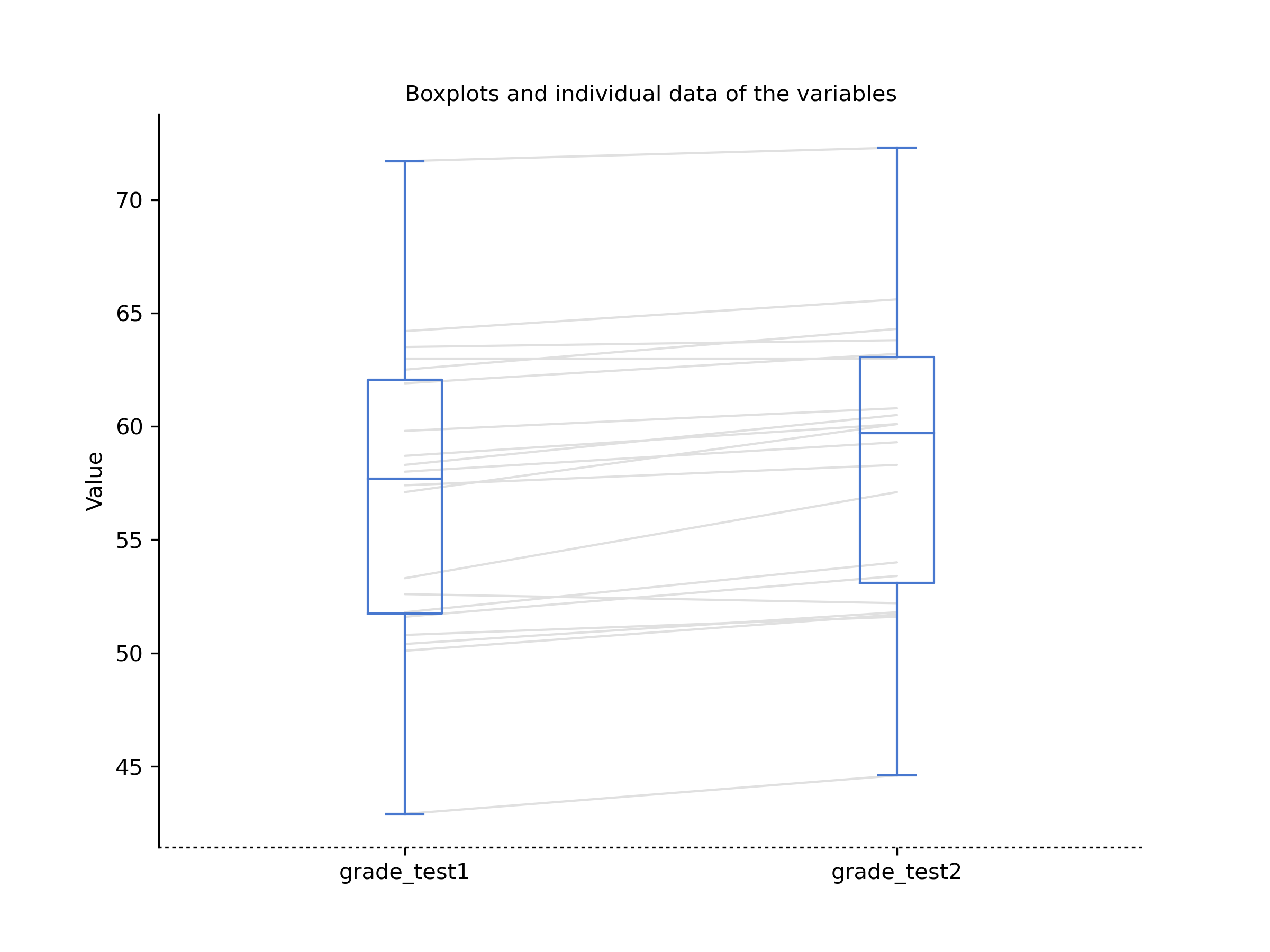

Figure 11.13: CogStat descriptives and boxplot for the variables grade_test1 and grade_test2 in the Compare repeated measures variables function.

At a glance, it does seem like the class is a hard one (most grades are between 50 and 60), but it also looks like there’s an improvement from the first test to the second one. The descriptives support this impression: across all 20 students, the mean grade for the first test is 56.98, but this rises to 58.38 for the second test. However, given that the standard deviations are 6.45 and 6.24 respectively, it’s starting to feel like maybe the improvement is just illusory, perhaps just random variation. This impression is reinforced when you look at the means (i.e. the horizontal blue line in each rectangle) and confidence intervals (i.e. the blue rectangles themselves) plotted in the boxplot. Looking at how wide those confidence intervals are, we’d be tempted to think that the apparent improvement in student performance is pure chance.

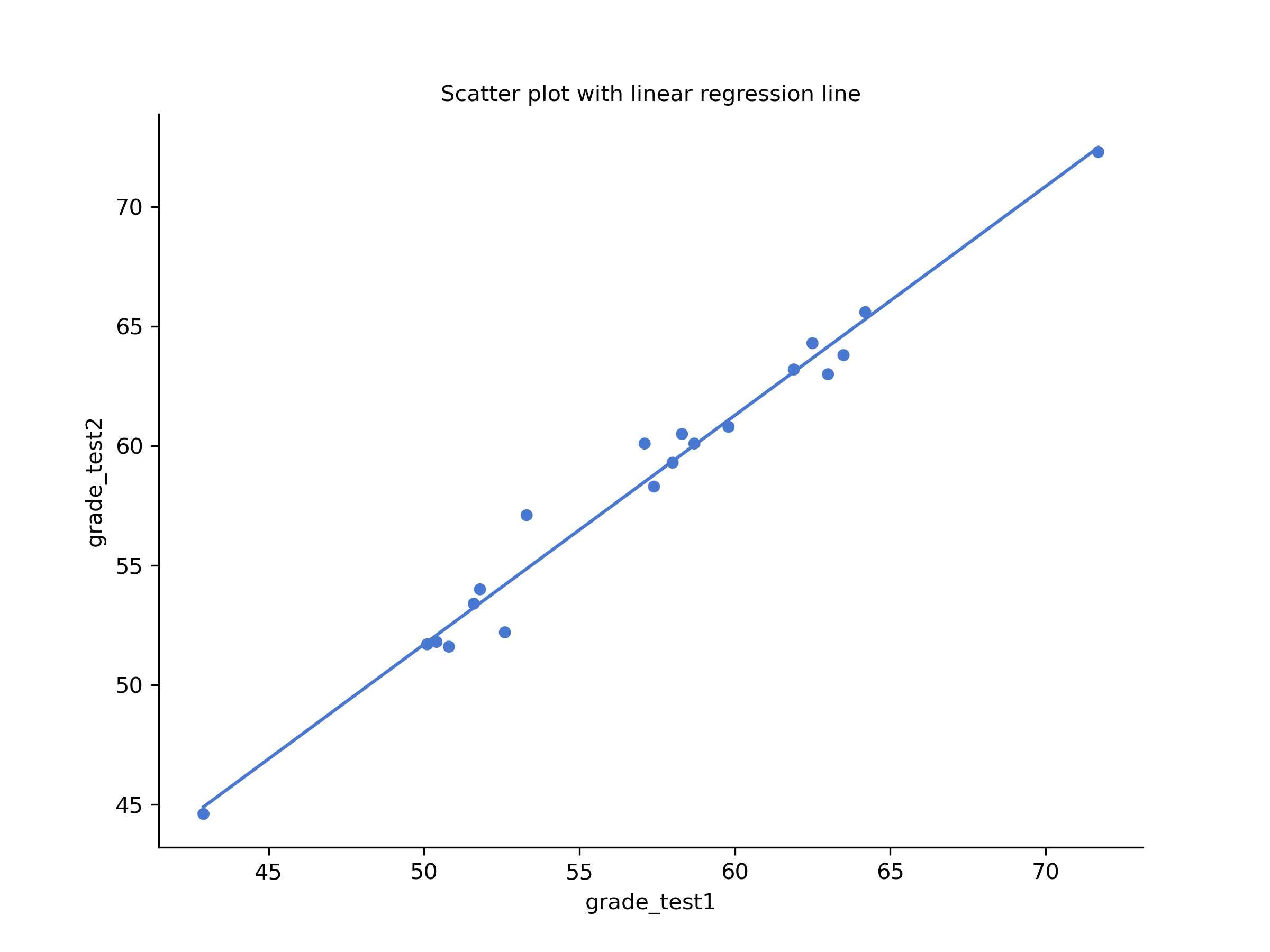

Nevertheless, this impression is wrong. To see why, take a look at the scatterplot of the grades for test 1 against the grades for test 2. Let us use CogStat’s Explore relation of variable pair function, so we see a scatter plot with a linear regression line (shown in Figure 11.14).

Figure 11.14: Scatterplot showing the individual grades for test 1 and test 2

In this plot, each dot corresponds to the two grades for a given student: if their grade for test 1 ($x$ co-ordinate) equals their grade for test 2 ($y$ co-ordinate), then the dot falls on the diagonal line where $x = y$. Points falling above that diagonal are the students that performed better on the second test.

If we were to try to do an independent samples $t$-test, we would be conflating the within-subject differences (which is what we’re interested in testing) with the between-subject variability (which we are not).

The solution to the problem, mathematically speaking, is a one-sample $t$-test on the within-subject difference variable. To formalise this slightly, if $X_{i1}$ is the score that the $i$-th participant obtained on the first variable, and $X_{i2}$ is the score that the same person obtained on the second one, then the difference score is:

$$D_i = X_{i1} - X_{i2}$$

Notice that the difference score is variable 1 minus variable 2 and not the other way around, so if we want improvement to correspond to a positive-valued difference, we actually want “test 2” to be our “variable 1”. Equally, we would say that $\mu_D = \mu_1 - \mu_2$ is the population mean for this difference variable. So, to convert this to a hypothesis test, our null hypothesis is that this mean difference is zero and the alternative hypothesis is that it is not:

$$H_0: \mu_D = 0 \qquad H_1: \mu_D \neq 0$$

(this is assuming we’re talking about a two-sided test here). This is more or less identical to the way we described the hypotheses for the one-sample $t$-test: the only difference is that the specific value that the null hypothesis predicts is 0. And so our $t$-statistic is defined in more or less the same way too. If we let $\bar D$ denote the mean of the difference scores, then

$$t = \frac{\bar D}{\mathrm{SE}(\bar D)}$$

which is

$$t = \frac{\bar D}{\hat\sigma_D / \sqrt{N}}$$

where $\hat\sigma_D$ is the standard deviation of the difference scores. Since this is just an ordinary one-sample $t$-test with nothing special about it, the degrees of freedom are still $N - 1$.

And that’s it: the paired samples $t$-test really isn’t a new test at all. It’s a one-sample $t$-test, but applied to the difference between two variables. It’s actually very simple; the only reason it merits a discussion as long as this one is that you need to be able to recognise when a paired samples test is appropriate, and to understand why it’s better than an independent samples test.
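Because the paired test is just a one-sample test on the difference scores, a sketch needs only a few lines. As before, this is an illustrative Python sketch with a hypothetical function name (`paired_t`) and made-up scores, not CogStat’s code; it uses $D_i = X_{i1} - X_{i2}$ as in the text and returns $t$ with $N - 1$ degrees of freedom.

```python
import math
from statistics import mean, stdev


def paired_t(x1, x2):
    """Paired samples t: a one-sample t-test of D_i = x1[i] - x2[i] against 0."""
    if len(x1) != len(x2):
        raise ValueError("paired samples need one score per person in each variable")
    d = [a - b for a, b in zip(x1, x2)]   # difference scores D_i
    n = len(d)
    se = stdev(d) / math.sqrt(n)          # stdev divides by n - 1
    return mean(d) / se, n - 1
```

For three hypothetical people with scores `[12, 15, 17]` and `[10, 12, 15]`, the difference scores are 2, 3 and 2, and `paired_t` gives $t = 7$ with 2 degrees of freedom. Swapping the arguments flips the sign of $t$, which is why the order of the variables matters when you interpret the output.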

Looking at the CogStat result set, the software automatically decided to run a paired -test when we used the Compare repeated measures variables function:

Population parameter estimations

Present confidence interval values suppose normality.

| | Point estimation | 95% CI (low) | 95% CI (high) |
|---|---|---|---|
| grade_test1 | 56.98 | 53.88 | 60.08 |
| grade_test2 | 58.38 | 55.39 | 61.38 |

Hypothesis tests

Testing if the means are the same.

Two variables. Interval variables. >> Choosing paired t-test or paired Wilcoxon test depending on the assumptions.

Checking for normality.

Shapiro-Wilk normality test in variable Difference of grade_test1 and grade_test2: W = 0.97, p = .678

Normality is not violated. >> Running paired t-test.

Sensitivity power analysis. Minimal effect size to reach 95% power with the present sample size for the present hypothesis test. Minimal effect size in d: 0.85.

Result of paired samples t-test: t(19) = -6.48, p < .001

Result of the Bayesian two-samples t-test: BF10 = 5991.58, BF01 = 0.00

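From the population parameter estimations, we know there’s an average improvement of 1.4 points from test 1 to test 2 (), and the paired $t$-test shows that this is significantly different from 0 ($t(19) = -6.48$, $p < .001$). The $t$ value is negative because CogStat computes the difference as grade_test1 minus grade_test2, while the grades improved from test 1 to test 2.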

11.6 Effect size (Cohen’s $d$)

The most commonly used measure of effect size for a $t$-test is Cohen’s $d$ (Cohen, 1988). Cohen himself defined it primarily in the context of an independent samples $t$-test, specifically the Student test. In that context, a natural way of defining the effect size is to divide the difference between the means by an estimate of the standard deviation. In other words, we’re looking to calculate something along the lines of this:

$$d = \frac{(\text{mean } 1) - (\text{mean } 2)}{\text{std dev}}$$

and he suggested a rough guide for interpreting $d$ in Table 11.1. You’d think that this would be pretty unambiguous, but it’s not, largely because Cohen wasn’t too specific on what he thought should be used as the measure of the standard deviation. As discussed by McGrath & Meyer (2006), there are several different versions in common usage, and each author tends to adopt slightly different notation. CogStat automatically reports Cohen’s $d$ as part of its output whenever it makes sense.

| Cohen’s $d$ | Interpretation |
|---|---|
| about 0.2 | small effect |
| about 0.5 | moderate effect |
| about 0.8 | large effect |
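Since Cohen left the choice of standard deviation open, any code has to pick one. The sketch below uses the pooled standard deviation from the Student test, which is one common version among several; we have not confirmed that this is the version CogStat reports, and the function name `cohens_d_pooled` is hypothetical.

```python
import math
from statistics import mean, variance


def cohens_d_pooled(x1, x2):
    """Cohen's d with the pooled SD in the denominator (one of several versions)."""
    n1, n2 = len(x1), len(x2)
    # Pooled variance: each group's variance weighted by its n - 1
    pooled_var = ((n1 - 1) * variance(x1) + (n2 - 1) * variance(x2)) / (n1 + n2 - 2)
    return (mean(x1) - mean(x2)) / math.sqrt(pooled_var)
```

For instance, two groups whose means differ by 1 and whose pooled SD is 1, such as `[1, 2, 3]` and `[2, 3, 4]`, give $d = -1$: by Cohen’s rough guide, a large effect in the direction of the second group.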

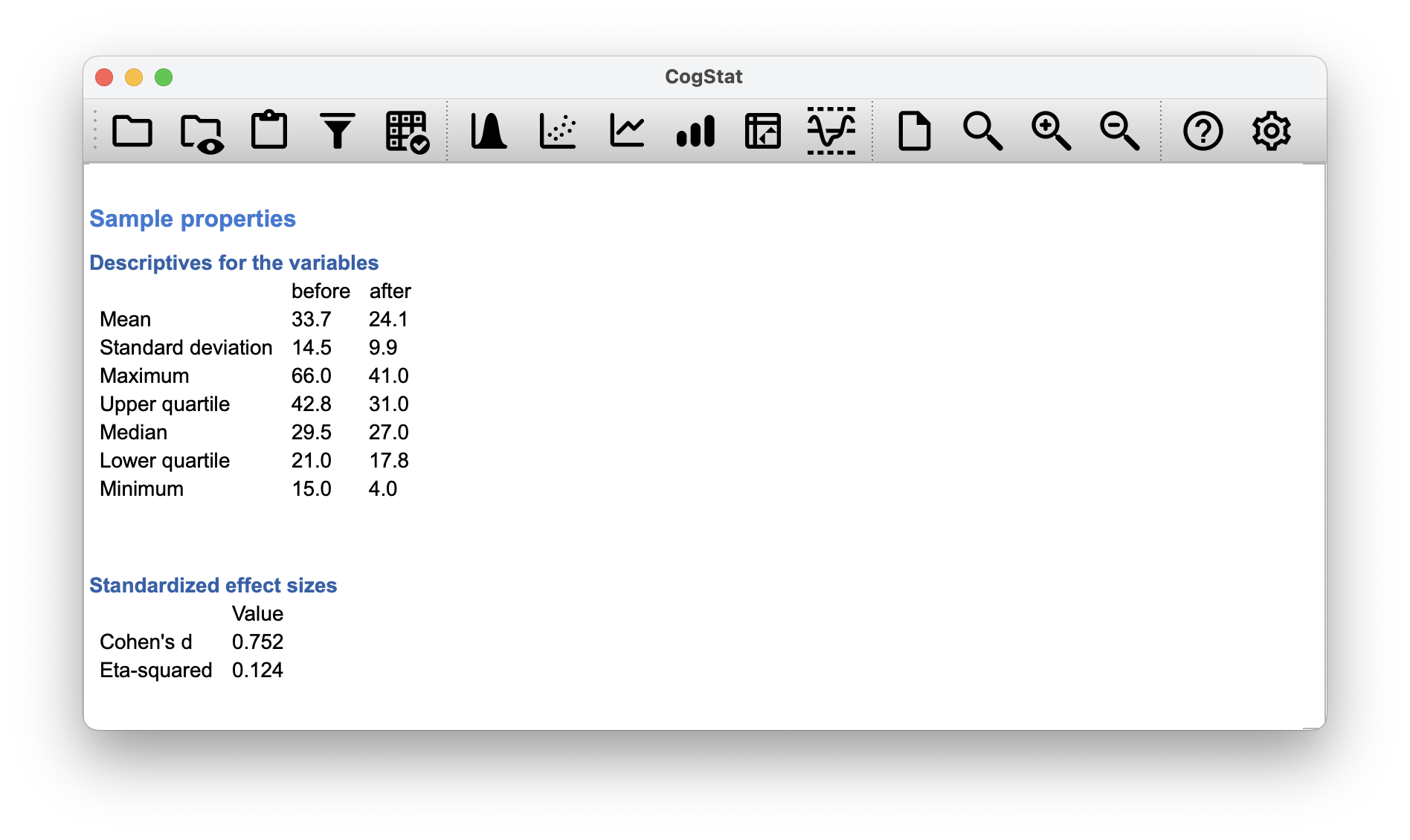

Let us go back to our examples and see how CogStat calculates effect sizes for us.

- One-sample $t$-test: CogStat does not calculate Cohen’s $d$ in the Explore variable function for our zeppo.csv data set.

For the other tests, the Standardised effect sizes of the sample are shown right after the Descriptives for the variables subsection of the result set, within the Sample properties section.

- Independent samples $t$-test (Student’s): CogStat calculates Cohen’s $d$ in the Compare groups function for our harpo.csv data set. The result is . CogStat also calculates eta-squared ($\eta^2$), which is used for ANOVA, and we’ll discuss it in Chapter 12.

- Independent samples $t$-test (Welch): CogStat calculates Cohen’s $d$ in the Compare groups function for our harpo_welch.csv data set. The result is Cohen’s $d$ = , eta-squared = .

- Paired samples $t$-test: CogStat calculates Cohen’s $d$ in the Compare repeated measures variables function for our chico.csv data set. The result is Cohen’s $d$ = , eta-squared = .

11.7 Normality of a sample

All of the tests that we have discussed so far in this chapter have assumed that the data are normally distributed. This assumption is often quite reasonable because the central limit theorem (Section 8.3.3) does tend to ensure that many real-world quantities are normally distributed. Any time you suspect your variable is actually an average of lots of different things, there’s a good chance that it will be normally distributed, or at least close enough to normal that you can get away with using $t$-tests.

However, there are lots of ways in which you can end up with variables that are highly non-normal. For example, any time you think that your variable is actually the minimum of lots of different things, there’s a very good chance it will end up quite skewed. In psychology, response time (RT) data is a good example of this. If you suppose that there are lots of things that could trigger a response from a human participant, then the actual response will occur the first time one of these trigger events occurs.53 This means that RT data are systematically non-normal.

How can we check the normality of a sample?

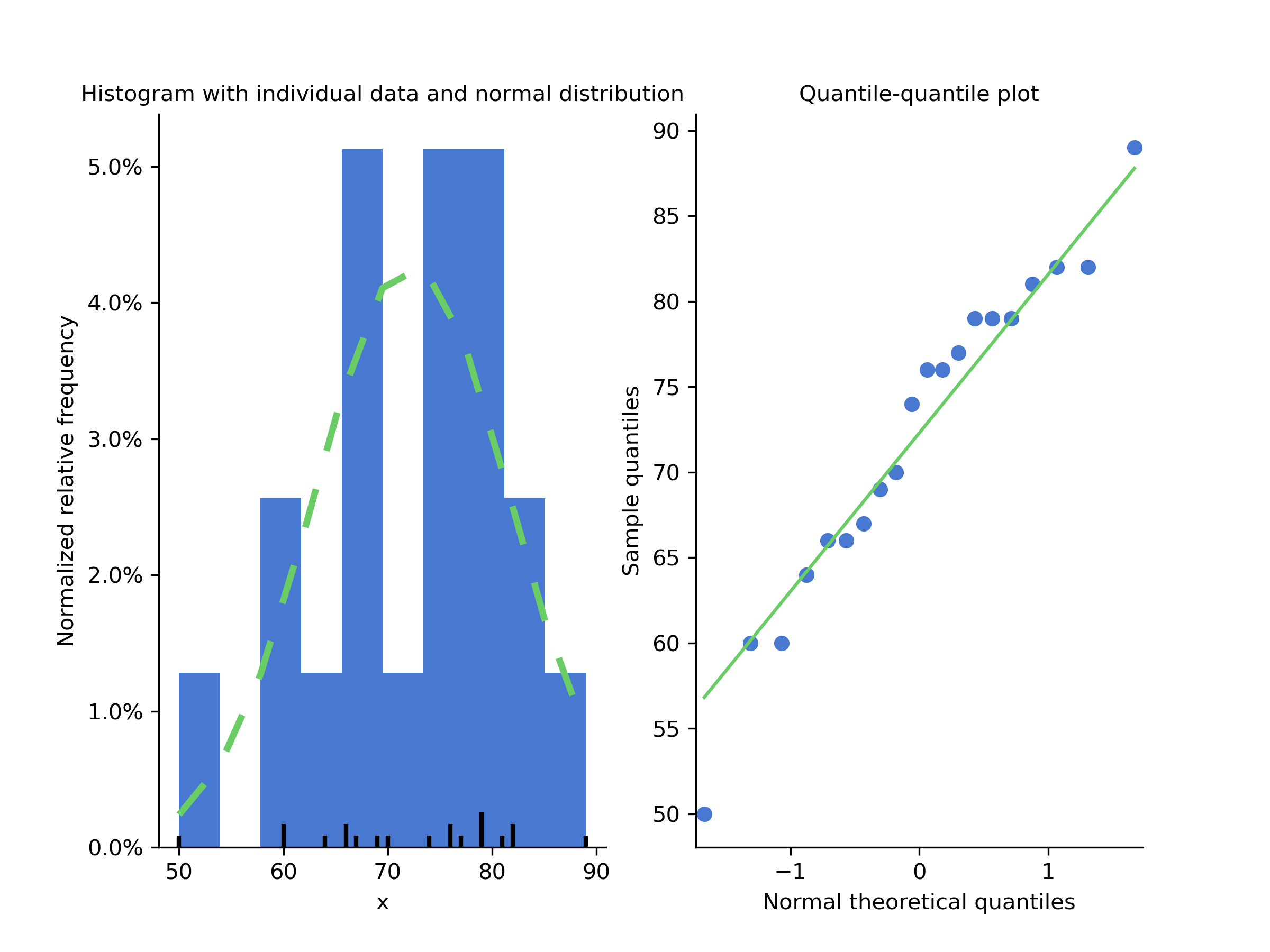

You might recall seeing this earlier in this chapter, but let’s take another look at a histogram and QQ plot without going back to the beginning.

Figure 11.15: Left: a histogram of the data points (observations) in the zeppo.csv data set with a green dashed line showing the normal distribution curve. Right: a QQ plot of the same.

One way to check whether a sample violates the normality assumption is to draw a “quantile-quantile” plot (QQ plot). This allows you to visually check whether you see any systematic violations. In a QQ plot, each observation is plotted as a single dot. The x coordinate is the theoretical quantile that the observation should fall in if the data were normally distributed (with mean and variance estimated from the sample), and the y coordinate is the actual quantile of the data within the sample (i.e. sample quantile). If the data are normal, the dots should form a straight line; that is, they should lie close to the green line.
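The coordinates of such a plot are easy to compute. This sketch follows the description above: it sorts the sample and pairs each value with the quantile that a normal distribution (mean and standard deviation estimated from the sample) would predict. The plotting positions $(i - 0.5)/N$ are one common convention among several, CogStat may use a different one, and the function name `qq_points` is hypothetical.

```python
from statistics import NormalDist, mean, stdev


def qq_points(sample):
    """Return (theoretical quantile, sample quantile) pairs for a normal QQ plot."""
    data = sorted(sample)                           # sample quantiles, in order
    n = len(data)
    fitted = NormalDist(mean(data), stdev(data))    # normal fitted to the sample
    # Plotting positions (i - 0.5) / n: one common convention among several
    theoretical = [fitted.inv_cdf((i - 0.5) / n) for i in range(1, n + 1)]
    return list(zip(theoretical, data))
```

Plotting the first element of each pair on the x axis and the second on the y axis gives the QQ plot; for normal data the points cluster around the line $y = x$.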

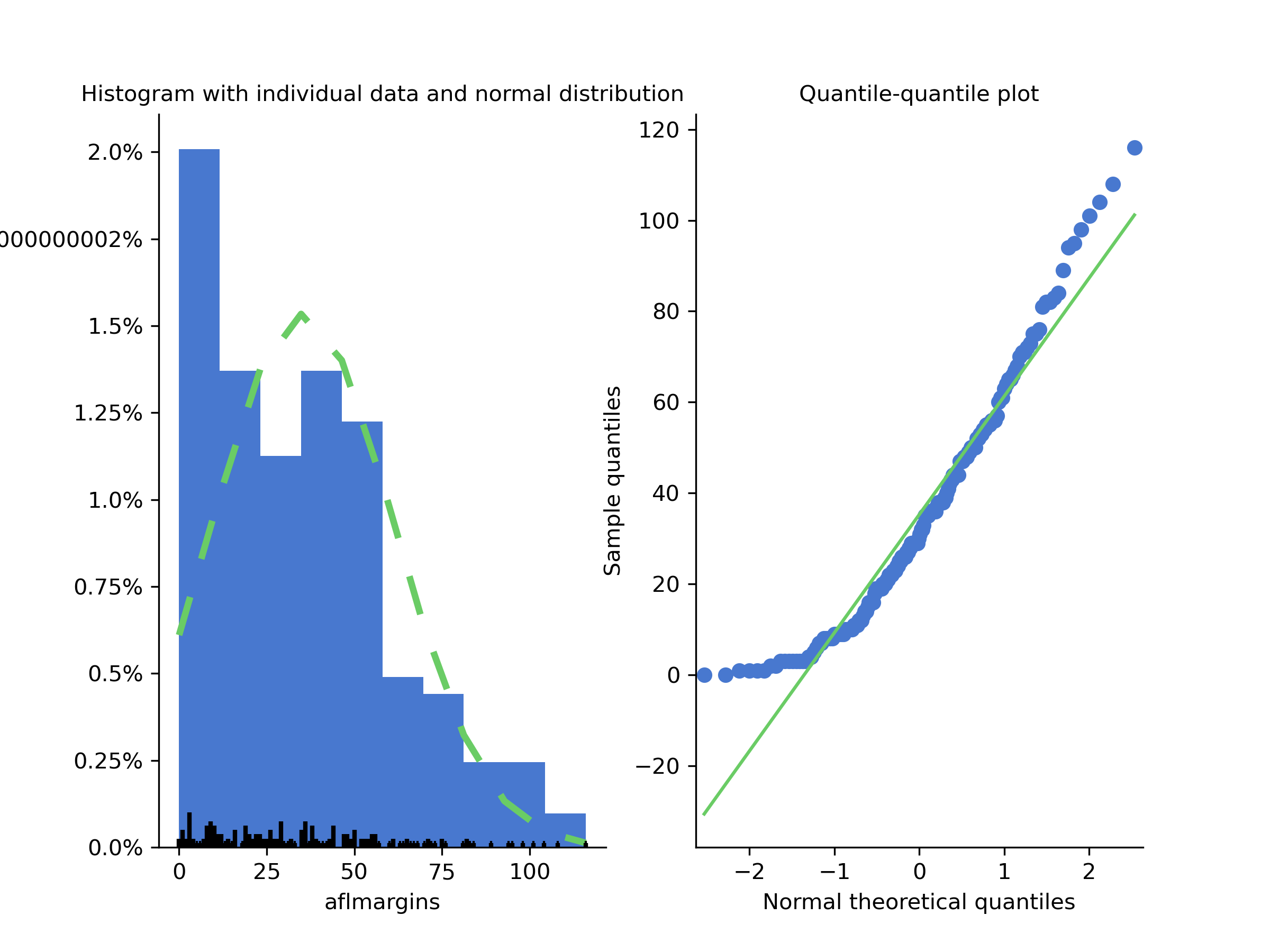

Now, let’s see what the QQ plot looks like if we have a data set that violates the assumption of normality. You can try this yourself on the aflsmall data set from earlier.

Figure 11.16: Left: a histogram of the data points (observations) in the aflsmall.csv data set with a green dashed line showing the normal distribution curve. Right: a QQ plot of the same showing how the data points curve and don’t form a straight line.

Although QQ plots provide a simple visual way to check the normality of your data informally, you may also want a formal test. The Shapiro-Wilk test (Shapiro & Wilk, 1965) is what you’re looking for.54

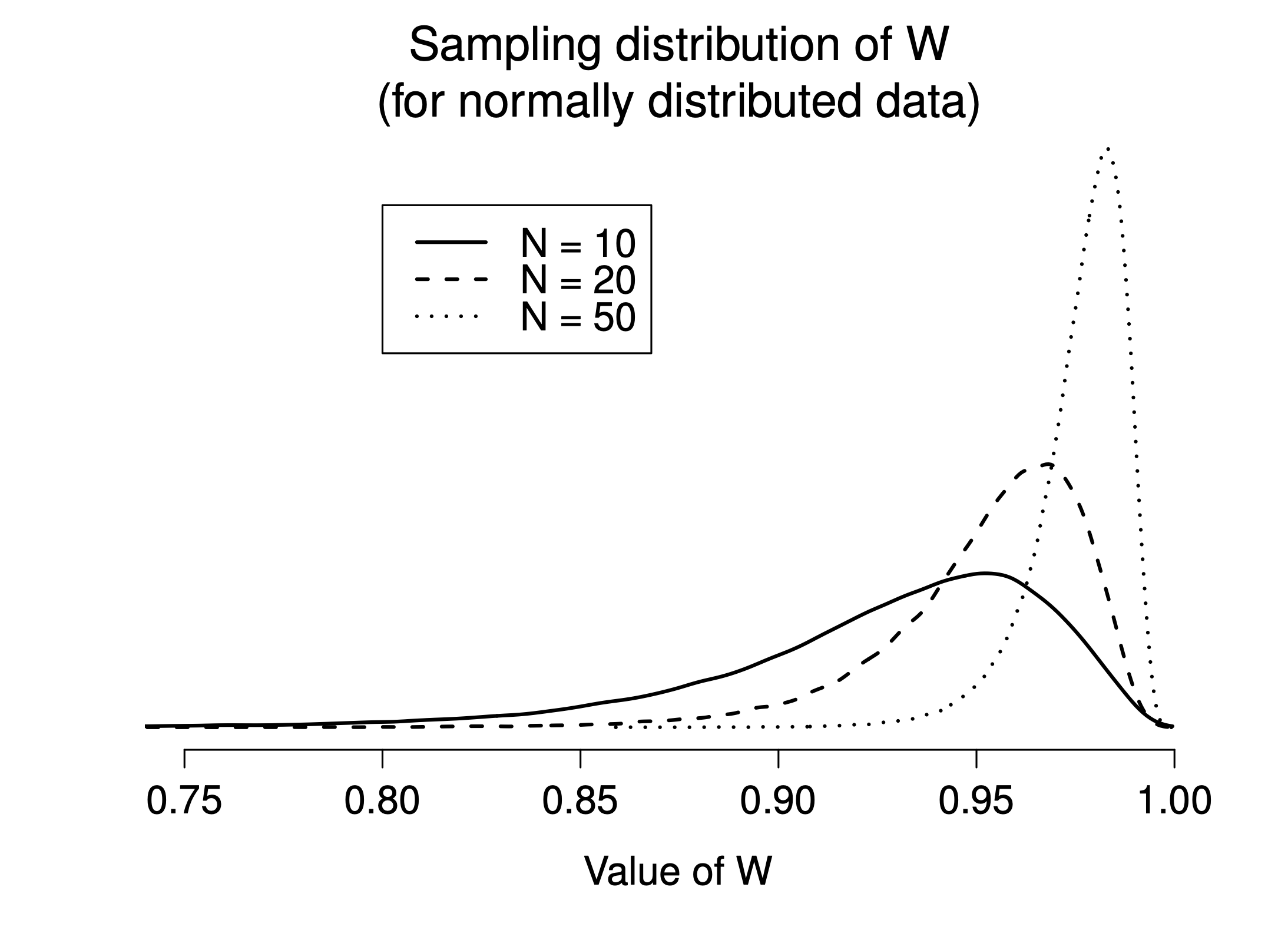

As you’d expect, the null hypothesis being tested is that a set of observations is normally distributed. The test statistic it calculates is conventionally denoted $W$, and is calculated as follows. First, we sort the observations in order of increasing size and let $X_1$ be the smallest value in the sample, $X_2$ be the second smallest and so on. Then the value of $W$ is given by

$$W = \frac{\left(\sum_{i=1}^N a_i X_i\right)^2}{\sum_{i=1}^N \left(X_i - \bar{X}\right)^2}$$

where $\bar X$ is the mean of the observations, and the $a_i$ values are something complicated beyond the scope of an introductory text.

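Unlike most of the tests we run, here a significant result is bad news. If the $p$-value of a Shapiro-Wilk test is less than $\alpha$, you can conclude that the data are not normally distributed. If the $p$-value is greater than $\alpha$, the test has found no evidence against normality, and you can proceed as if the data were normally distributed (strictly speaking, this does not prove that they are).55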

The sampling distribution for $W$ (which is not one of the standard ones discussed in Chapter 7) depends on the sample size $N$. To give you a feel for what these sampling distributions look like, we’ve plotted three of them in Figure 11.17. Notice that, as the sample size starts to get large, the sampling distribution becomes very tightly clumped up near $W = 1$, and as a consequence, for larger samples, $W$ doesn’t have to be much smaller than 1 for the test to be significant.

Figure 11.17: Sampling distribution of the Shapiro-Wilk $W$ statistic, under the null hypothesis that the data are normally distributed, for samples of size 10, 20 and 50. Note that small values of $W$ indicate departure from normality.

When reporting the results for a Shapiro-Wilk test, you should make sure to include the test statistic $W$ and the $p$ value, though given that the sampling distribution depends so heavily on $N$, it would probably be polite to include $N$ as well.

11.8 Testing non-normal data with Wilcoxon tests

Suppose your data are non-normal, but you still want to run something like a $t$-test. Remember our aflsmall data set, which violates the assumption of normality. When exploring the variable, CogStat will state that Normality is violated. The statistics are also given: Shapiro–Wilk normality test in variable aflmargins: W = 0.94, p < .001.

This is the situation where you want to use Wilcoxon tests. Unlike the $t$-test, Wilcoxon tests don’t assume normality. In fact, they don’t make any assumptions about what kind of distribution is involved; in statistical jargon, this makes them nonparametric tests. While avoiding the normality assumption is nice, there’s a drawback: the Wilcoxon test is usually less powerful than the $t$-test (i.e., it has a higher Type II error rate).

Like the $t$-test, the Wilcoxon test comes in two forms, one-sample and two-sample, and they’re used in more or less the exact same situations as the corresponding $t$-tests.

11.8.1 Two-sample Wilcoxon test (Mann-Whitney test)

Let’s start with the two-sample Wilcoxon test, also known as the Mann-Whitney test, since it’s simpler than the one-sample version.

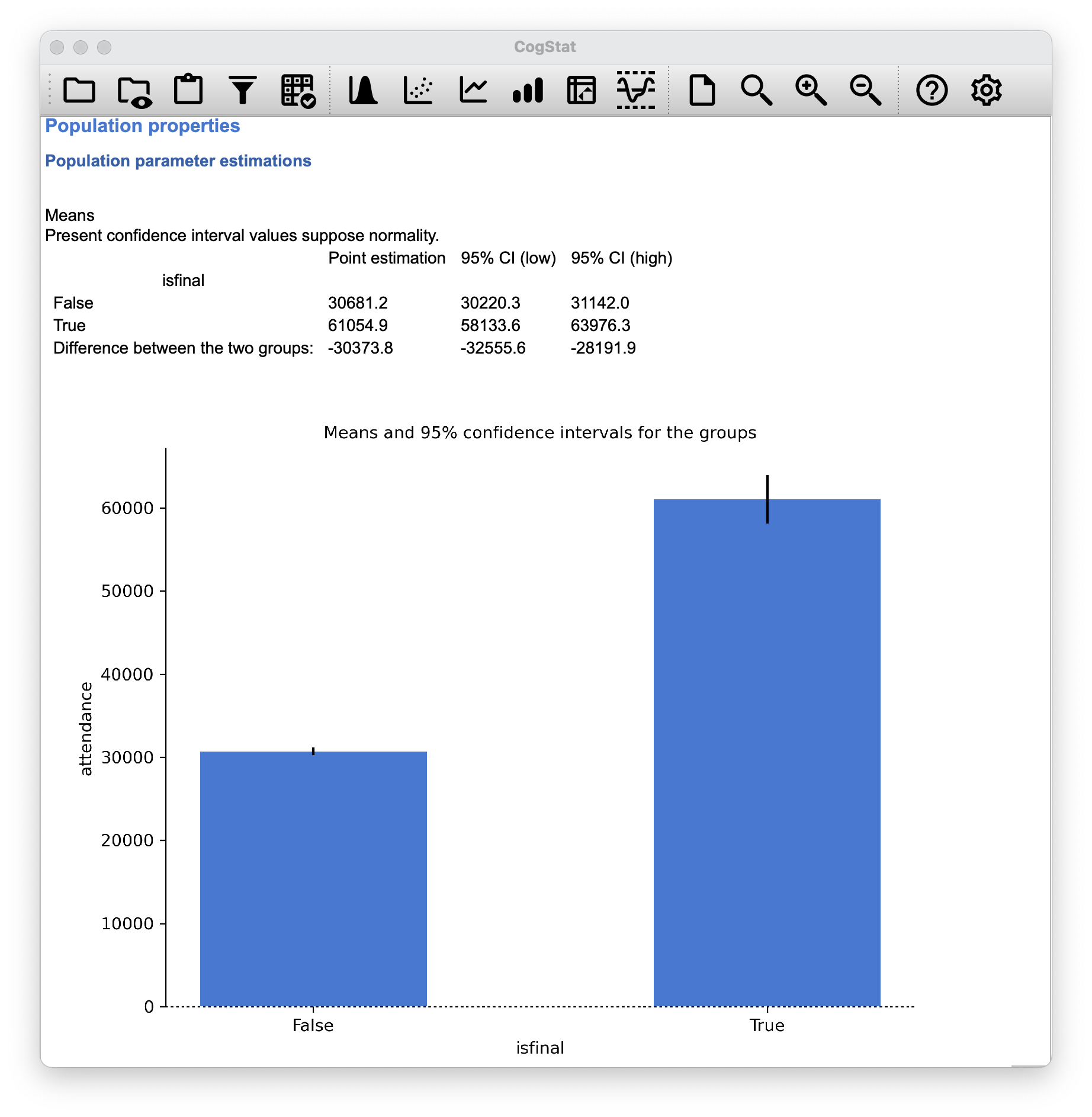

Let us recall our AFL example with the data set called afl24.csv. We want to see whether there is a statistically significant difference in attendance between finals and non-final games (Boolean variable name: isfinal).

Figure 11.18: AFL final and non-final attendance (Compare groups function used).

Running the Compare groups function gives the following results.

Our sample size is for non-final games, and for the final games. The Mann-Whitney test is a non-parametric test, so we don’t need to worry about the normality assumption.

Hypothesis tests

Testing if the means are the same.

One grouping variable. Two groups. Interval variable. >> Choosing two sample t-test, Mann-Whitney test or Welch's t-test depending on assumptions.

Checking for normality.

Shapiro-Wilk normality test in variable attendance (isfinal: False): W = 0.94, p < .001

Shapiro-Wilk normality test in variable attendance (isfinal: True): W = 0.97, p < .001

Checking for homogeneity of variance across groups.

Levene test: W = 76.47, p < .001

Normality is violated in variable attendance, group(s) ('False',), ('True',). >> Running Mann-Whitney test.

Result of independent samples Mann-Whitney rank test: U = 97189.00 p < .001

What does this $U$ score mean? Suppose we construct a table that compares every observation in group isfinal:True against every observation in group isfinal:False. Whenever the isfinal:True attendance value is larger, we place a check mark in the table. We then count up the number of check marks. This is our test statistic, $U$.
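The check-mark procedure translates directly into a double loop. In this sketch (hypothetical function name `mann_whitney_u`), ties count as half a check mark, which is the usual convention but not something the text specifies; which group CogStat treats as the first group is also not something we can confirm from its output.

```python
def mann_whitney_u(group_a, group_b):
    """Count the (a, b) pairs in which the group_a value is larger.

    A tie counts as half a check mark.
    """
    u = 0.0
    for a in group_a:
        for b in group_b:
            if a > b:
                u += 1.0      # a check mark for group_a
            elif a == b:
                u += 0.5      # a tie is split between the groups
    return u
```

Counting in both directions always gives two totals that add up to $N_1 \times N_2$, the number of pairs, so a $U$ well above half of that total means the first group tends to have the larger values.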

But how do we interpret this result? From the chart in Figure 11.18, we can already see that the median attendance for the final games is higher than the median attendance for the non-final games. Note that $U$ is a count, so it is always zero or positive: its sign can’t tell you which group is larger, which is why we check the chart. The $p$ value is below our $\alpha$ level of .05, so we can reject the null hypothesis that the two groups have the same median attendance. In other words, we can conclude that the final games have a significantly higher attendance.

When quoting the Mann-Whitney test, you should report the $U$ statistic and the $p$ value.

11.8.2 One-sample and paired samples Wilcoxon tests

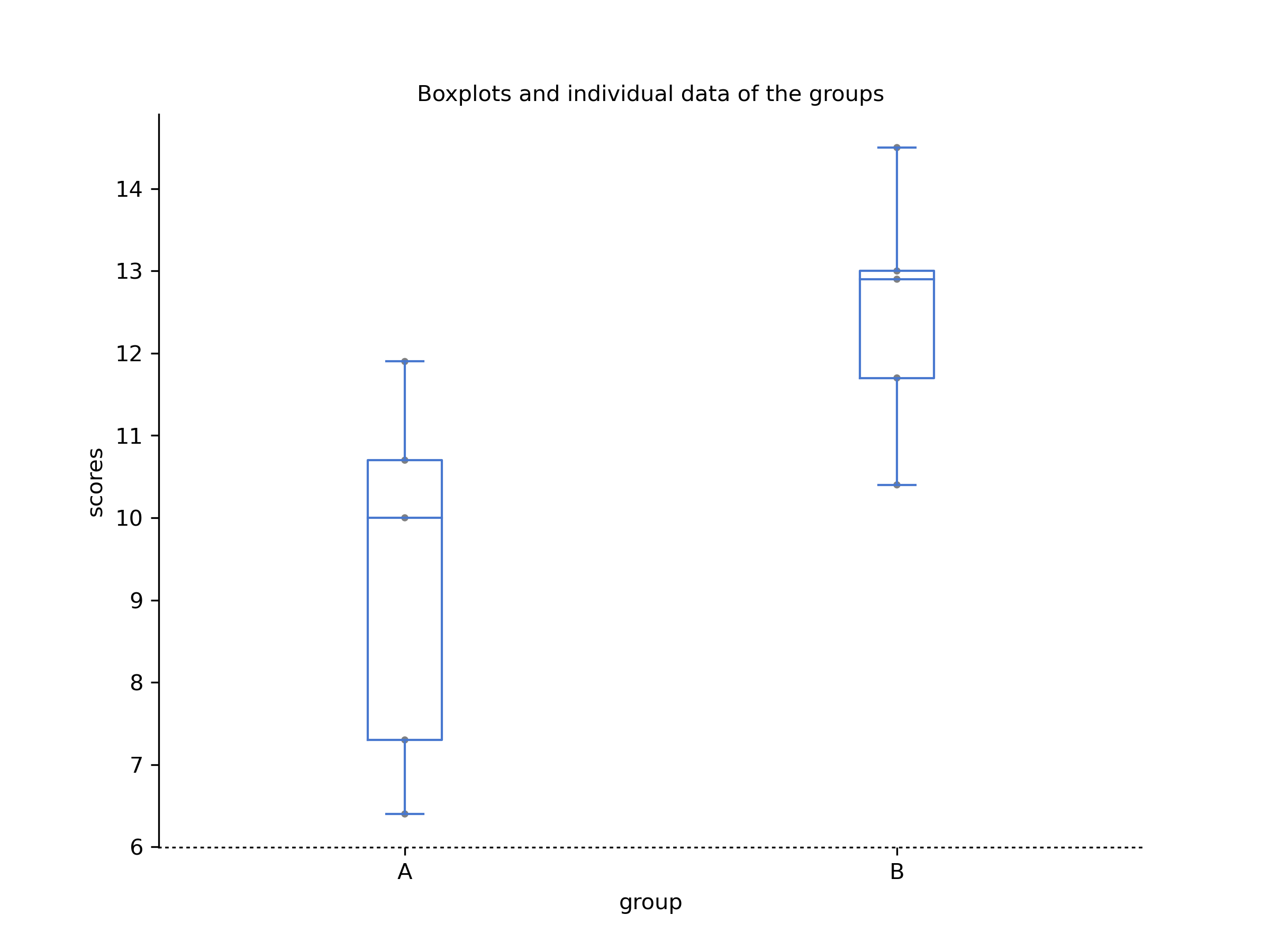

What about the one-sample Wilcoxon test, or equivalently, the paired samples Wilcoxon test? Suppose we’re interested in finding out whether taking a biology class has any effect on the happiness of students. The data is stored in the happiness.csv file.

What is measured here is the happiness of each student before taking the class and after taking the class. The change score is the difference between the two. There’s no fundamental difference between doing a paired-samples test using before and after, versus doing a one-sample test using the change scores.
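The equivalence is easy to see in code: the signed-rank test only ever looks at the difference scores, so feeding it before minus after gives the same result as feeding it those change scores directly. The sketch below computes the two rank sums of the Wilcoxon signed-rank test: zero differences are dropped, and tied absolute values share their average rank. Many packages report the smaller of the two sums as $T$ for a two-sided test; we assume, but have not verified, that CogStat’s $T$ follows this convention. The function name `signed_rank_sums` is hypothetical.

```python
def signed_rank_sums(diffs):
    """Return (W_plus, W_minus), the Wilcoxon signed-rank sums.

    Zero differences are dropped; tied absolute values get their average rank.
    """
    nonzero = [d for d in diffs if d != 0]
    order = sorted(range(len(nonzero)), key=lambda i: abs(nonzero[i]))
    ranks = [0.0] * len(nonzero)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of tied absolute values starting at position i
        while j + 1 < len(order) and abs(nonzero[order[j + 1]]) == abs(nonzero[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1        # positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, nonzero) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, nonzero) if d < 0)
    return w_plus, w_minus
```

Calling it with `[b - a for b, a in zip(before, after)]` or with a precomputed list of change scores gives identical rank sums, because the two inputs are the same numbers.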

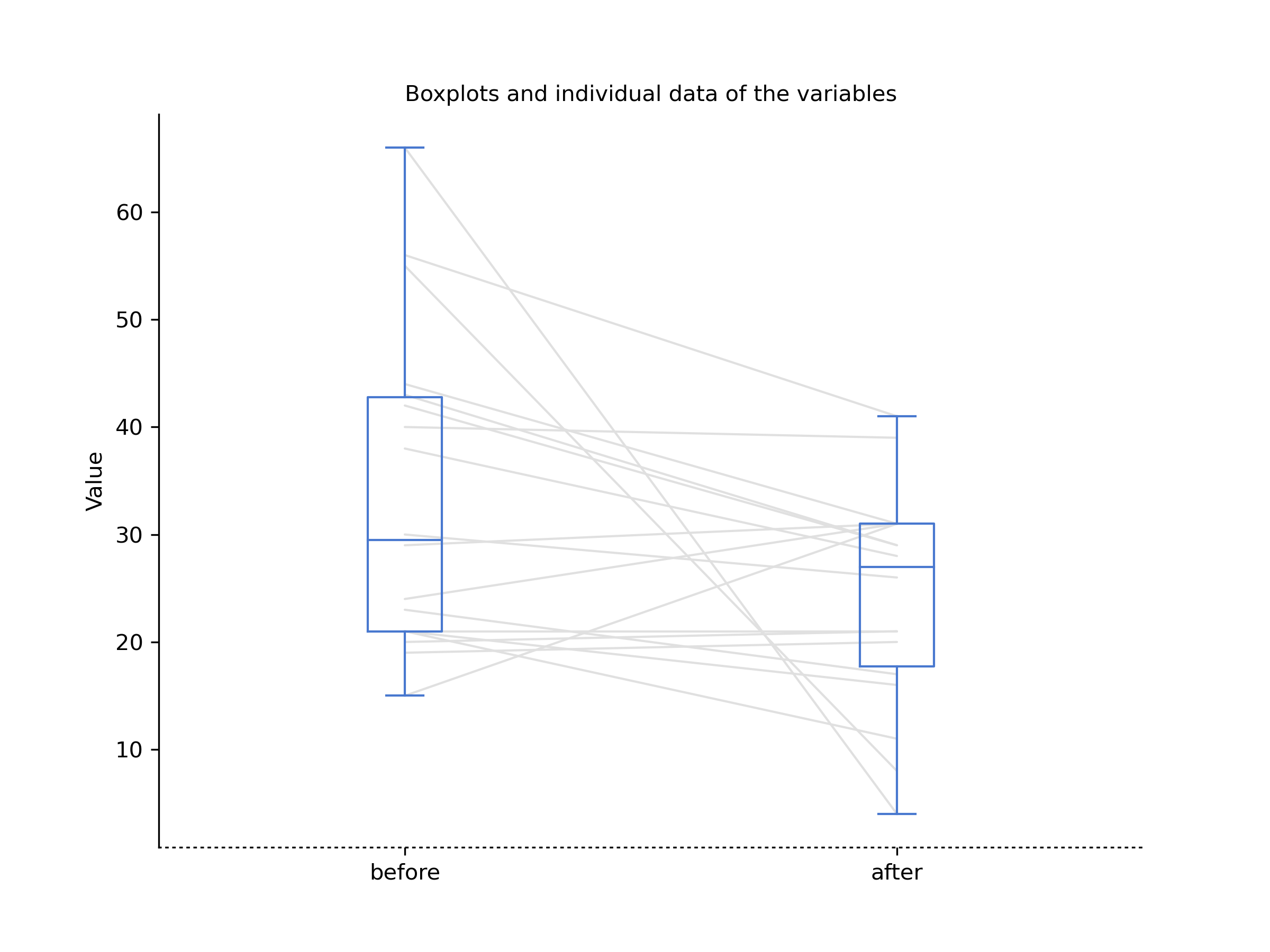

Let us first run a paired-samples Wilcoxon test in CogStat, using the Compare repeated measures variables function with the before and after variables.

Figure 11.19: Happiness before and after biology class (Compare repeated measures variables function used).

Let’s see the results:

Hypothesis tests

Testing if the means are the same.

Two variables. Interval variables. >> Choosing paired t-test or paired Wilcoxon test depending on the assumptions.

Checking for normality.

Shapiro-Wilk normality test in variable Difference of before and after: W = 0.80, p = .002

Normality is violated in variable(s) Difference of before and after. >> Running paired Wilcoxon test.

Result of Wilcoxon signed-rank test: T = 31.00 p = .031

The $p$ value is .031, which is below our $\alpha$ level of .05. This means that we can reject the null hypothesis that the median change in happiness is zero. In other words, we can conclude that taking the biology class has a statistically significant negative effect on the happiness of students. Why negative? The $T$ value is positive, but it doesn’t tell you the direction of the effect. As a rule, you should always plot your raw data and look at the descriptives as well. And as you’ve seen, the median happiness score for the before group is higher than the median happiness score for the after group.

To double-check, you can run a one-sample Wilcoxon test in CogStat using the Explore variable function with the change variable. This serves two purposes: you’ll see how the one-sample Wilcoxon test works, and you’ll also see how the one-sample and paired-samples tests relate to each other.

You’ll quickly see in the descriptives that the mean change is negative (), with a 95% confidence interval of  to , both of which are negative.

Hypothesis tests

Testing if mean deviates from the value 0.

Interval variable. >> Choosing one-sample t-test or Wilcoxon signed-rank test depending on the assumption.

Checking for normality.

Shapiro-Wilk normality test in variable change: W = 0.81, p = .002

Normality is violated. >> Running Wilcoxon signed-rank test.

Median: -5.5

Result of Wilcoxon signed-rank test: T = 31.50 p = .035

As this shows, the one-sample test on the change scores gives essentially the same result as the paired-samples test: a significant effect. (Don’t mind the small differences in the $T$ and $p$ values; they are due to rounding.)

11.9 Summary

- A one-sample $t$-test is used to compare a single sample mean against a hypothesised value for the population mean. (Section 11.2)

- An independent samples $t$-test is used to compare the means of two groups, and tests the null hypothesis that they have the same mean. It comes in two forms: the Student test (Section 11.3) assumes that the groups have the same standard deviation; the Welch test (Section 11.4) does not.

- A paired samples $t$-test is used when you have two scores from each person, and you want to test the null hypothesis that the two scores have the same mean. It is equivalent to taking the difference between the two scores for each person, and then running a one-sample $t$-test on the difference scores. (Section 11.5)

- Effect sizes for the difference between means can be calculated via the Cohen’s $d$ statistic. (Section 11.6)

- You can check the normality of a sample using QQ plots and the Shapiro-Wilk test. (Section 11.7)

- If your data are non-normal, you can use Wilcoxon and Mann-Whitney tests instead of $t$-tests. (Section 11.8)

References

Strictly speaking, the test only requires that the sampling distribution of the mean be normally distributed; if the population is normal then it necessarily follows that the sampling distribution of the mean is also normal. However, as we saw when talking about the central limit theorem, it’s entirely possible (even commonplace) for the sampling distribution to be normal even if the population distribution itself is non-normal. But in light of the sheer ridiculousness of the assumption that the true standard deviation is known, there really isn’t much point in going into details on this front!↩︎

Gosset only provided a partial solution: the general solution to the problem was provided by Sir Ronald Fisher.↩︎

A funny question almost always pops up at this point: what the heck is the population being referred to in this case? Is it the set of students actually taking Dr Harpo’s class (all 33 of them)? The set of people who might take the class (an unknown number of them)? Or something else? Does it matter which of these we pick? Technically yes, it does matter: if you change your definition of what the “real world” population actually is, then the sampling distribution of your observed mean changes too. The $t$-test relies on the assumption that the observations are sampled at random from an infinitely large population, and to the extent that real life isn’t like that, then the $t$-test can be wrong. In practice, however, this isn’t usually a big deal: even though the assumption is almost always wrong, it doesn’t lead to a lot of pathological behaviour from the test, so we tend to just ignore it.↩︎

This design is very similar to the one in Chapter 10.7 that motivated the McNemar test. This should be no surprise. Both are standard repeated measures designs involving two measurements. The only difference is that this time our outcome variable is interval scale (working memory capacity) rather than a binary, nominal scale variable (a yes-or-no question).↩︎

This is a massive oversimplification.↩︎

The Kolmogorov-Smirnov test is probably more traditional than the Shapiro-Wilk, though mostly you’ll encounter Shapiro-Wilk in psychology research.↩︎

This is the opposite of what you’d expect from a $p$-value, but it’s the way it is.↩︎